Hadoop is an open-source software framework for storing data and running applications on clusters of commodity hardware. It provides massive storage for any kind of data, enormous processing power, and the ability to run a very large number of concurrent tasks or jobs. Apache Hadoop is used mainly for data analysis.

Apache Spark is an open-source distributed general-purpose cluster-computing framework. Spark provides an interface for programming entire clusters with implicit data parallelism and fault tolerance.

The question is: which programming language is best suited to driving Hadoop and Spark?

The programming model for developing Hadoop-based applications is MapReduce. In other words, MapReduce is the processing layer of Hadoop.

The MapReduce programming model is designed to process large volumes of data in parallel by dividing the work into a set of independent tasks. Hadoop MapReduce is a software framework for easily writing applications that process vast amounts of structured and unstructured data stored in the Hadoop Distributed File System (HDFS). The biggest advantage of MapReduce is that it makes it easy to spread data processing across multiple computing nodes. Under the MapReduce model, the data processing primitives are called mappers and reducers.
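The mapper/reducer split can be illustrated with a minimal, single-process Python sketch of the classic word-count job. This is not Hadoop's API: the function names `map_phase`, `shuffle`, and `reduce_phase` are hypothetical, and the `shuffle` step stands in for the grouping by key that the framework performs between the map and reduce stages on a real cluster.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(line: str) -> Iterator[Tuple[str, int]]:
    """Mapper: emit (word, 1) for every word in one input line."""
    for word in line.lower().split():
        yield word, 1


def shuffle(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Group all intermediate values by key (the framework does this in Hadoop)."""
    groups: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key: str, values: List[int]) -> Tuple[str, int]:
    """Reducer: combine all values for one key into a single result."""
    return key, sum(values)


def word_count(lines: Iterable[str]) -> Dict[str, int]:
    # Each line could be mapped by an independent task; here they run sequentially.
    intermediate = (pair for line in lines for pair in map_phase(line))
    grouped = shuffle(intermediate)
    # Each key could likewise be reduced by an independent task.
    return dict(reduce_phase(k, v) for k, v in grouped.items())


if __name__ == "__main__":
    print(word_count(["the quick fox", "the lazy dog"]))
```

Because each mapper only sees one line and each reducer only sees the values for one key, both phases can be distributed across nodes, which is what makes the model easy to parallelize.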

Spark is written in Scala and Hadoop is written in Java.

The key difference between Hadoop MapReduce and Spark lies in their approach to processing: Spark can process data in memory, while Hadoop MapReduce has to read from and write to disk. As a result, processing speed differs significantly: Spark may be up to 100 times faster for some workloads.

In-memory processing is faster than Hadoop MapReduce's disk-based approach because no time is spent moving intermediate data in and out of disk. Spark owes its speed advantage to keeping as much of the work as possible in memory, although it can still spill data to disk when it does not fit in RAM.
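To illustrate the difference (not to measure it), here is a hedged Python sketch of a two-stage pipeline run in two ways. One variant writes the intermediate result to a temporary file and reads it back between stages, loosely mirroring MapReduce; the other hands it straight to the next stage in memory, loosely mirroring Spark. The stage functions are hypothetical; both variants return the same answer, and the disk variant simply pays for the extra serialization and I/O.

```python
import json
import os
import tempfile
from typing import List


def stage_one(values: List[int]) -> List[int]:
    """First transformation: square every value."""
    return [v * v for v in values]


def stage_two(values: List[int]) -> int:
    """Second transformation: sum the even results."""
    return sum(v for v in values if v % 2 == 0)


def run_with_disk(values: List[int]) -> int:
    # MapReduce-style: persist the intermediate result, then read it back.
    fd, path = tempfile.mkstemp(suffix=".json")
    try:
        with os.fdopen(fd, "w") as out:
            json.dump(stage_one(values), out)
        with open(path) as inp:
            intermediate = json.load(inp)
    finally:
        os.remove(path)
    return stage_two(intermediate)


def run_in_memory(values: List[int]) -> int:
    # Spark-style: pass the intermediate result directly to the next stage.
    return stage_two(stage_one(values))


if __name__ == "__main__":
    data = list(range(10))
    print(run_with_disk(data), run_in_memory(data))
```

On a real cluster the gap grows with data size and the number of chained stages, since every disk round trip is repeated for each stage.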

Spark clusters tend to cost more in hardware than Hadoop MapReduce clusters because Spark needs a lot of RAM to hold data in memory.

Hadoop typically runs on Linux, so you need a working knowledge of Linux.

Java is important for Hadoop for two reasons:

- Some advanced features are only available through the Java API.

- Java lets you dig into the Hadoop source code and figure out what is going wrong.

In both situations, knowing Java is essential.

As a developer, you get access to more of the advanced features of Hadoop and Spark if you start with their native languages (Java and Scala, respectively).

What Does Python Offer for Hadoop and Spark?

- Simple syntax – Python's simple syntax makes it more user-friendly than the other two languages.

- Easy to learn – Python's syntax reads much like English, so it is easier to learn and master.

- Large community support – Unlike Scala, Python has a huge, active community that can help you solve your problems.

- Libraries, frameworks, and packages – Python offers a huge number of scientific packages, libraries, and frameworks that help you work in Hadoop and Spark environments.

- Python compatibility with Hadoop – A package called Pydoop provides access to the HDFS API and lets you write Hadoop MapReduce programs and applications in Python.

- Hadoop is built on Java (as are some non-Hadoop big-data technologies, such as the Elasticsearch engine, even though it processes JSON REST requests).

- Spark is built on Scala, although PySpark (the lovechild of Python and Spark, of course) has gained a lot of momentum recently.
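Pydoop's own API is not reproduced here. As a dependency-free illustration of what a MapReduce program written in Python can look like, the sketch below assumes the Hadoop Streaming convention: the mapper and reducer exchange tab-separated `key\tvalue` text lines, and the framework sorts the mapper output by key before the reducer sees it. The record format (`year reading` per line) and the function names are hypothetical, and `sorted` stands in locally for Hadoop's shuffle-and-sort step.

```python
import itertools
from typing import Iterable, Iterator


def mapper(lines: Iterable[str]) -> Iterator[str]:
    """Turn raw 'year reading' records into tab-separated key/value lines."""
    for line in lines:
        parts = line.split()
        if len(parts) != 2:
            continue  # skip malformed records
        year, reading = parts
        yield f"{year}\t{reading}"


def reducer(sorted_lines: Iterable[str]) -> Iterator[str]:
    """Emit the maximum reading per year; input must already be sorted by key."""
    pairs = (line.split("\t", 1) for line in sorted_lines)
    for year, group in itertools.groupby(pairs, key=lambda kv: kv[0]):
        yield f"{year}\t{max(int(reading) for _, reading in group)}"


if __name__ == "__main__":
    records = ["2023 15", "2022 31", "2023 27", "bad-line", "2022 8"]
    # sorted() stands in for the framework's shuffle-and-sort step.
    for out in reducer(sorted(mapper(records))):
        print(out)
```

The reducer relies on equal keys arriving next to each other, which is exactly the guarantee the sort step provides; without it, `groupby` would split one key into several groups.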

If you are planning to become a Hadoop data analyst, Python is preferable: it has many libraries for advanced analytics, and you can use Spark through the PySpark API to perform advanced analytics and implement machine learning techniques.

The key-value pair is the record entity that a MapReduce job receives for execution. Before data is passed to the mapper, it must first be converted into key-value pairs, because the mapper only understands data in key-value form.

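In Hadoop MapReduce, key-value pairs are generated by the InputSplit and the RecordReader: the input is divided into InputSplits, and a RecordReader converts each split into key-value pairs for the mapper. With the default TextInputFormat, the key is the byte offset of each line and the value is the line's contents.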
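As a rough illustration, the following Python sketch imitates a line-oriented record reader. It assumes behavior like Hadoop's default TextInputFormat, where each record's key is the byte offset at which the line starts and the value is the line's text. The function `read_records` is hypothetical and works on an in-memory byte string rather than an HDFS input split.

```python
from typing import Iterator, Tuple


def read_records(data: bytes, encoding: str = "utf-8") -> Iterator[Tuple[int, str]]:
    """Yield (byte_offset, line) pairs, similar to a text-line record reader."""
    offset = 0
    for raw_line in data.splitlines(keepends=True):
        # The key is where the line starts; the value drops the line terminator.
        yield offset, raw_line.rstrip(b"\r\n").decode(encoding)
        offset += len(raw_line)


if __name__ == "__main__":
    for key, value in read_records(b"hello world\nfoo bar\n"):
        print(key, value)
```

Each (offset, line) pair is what the mapper then receives, which is why a word-count mapper typically ignores the key and works only on the value.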

Active Hydrating Toner, Anti-Aging Replenishing Advanced Face Moisturizer, with Vitamins A, C, E & Natural Botanicals to Promote Skin Balance & Collagen Production, 6.7 Fl Oz

Age Defying 0.3% Retinol Serum, Anti-Aging Dark Spot Remover for Face, Fine Lines & Wrinkle Pore Minimizer, with Vitamin E & Natural Botanicals

Firming Moisturizer, Advanced Hydrating Facial Replenishing Cream, with Hyaluronic Acid, Resveratrol & Natural Botanicals to Restore Skin's Strength, Radiance, and Resilience, 1.75 Oz

Skin Stem Cell Serum

Smartphone 101 - Pick a smartphone for me - android or iOS - Apple iPhone or Samsung Galaxy or Huawei or Xaomi or Google Pixel

Can AI Really Predict Lottery Results? We Asked an Expert.

Djamgatech

Read Photos and PDFs Aloud for me iOS

Read Photos and PDFs Aloud for me android

Read Photos and PDFs Aloud For me Windows 10/11

Read Photos and PDFs Aloud For Amazon

Get 20% off Google Workspace (Google Meet) Business Plan (AMERICAS): M9HNXHX3WC9H7YE (Email us for more)

Get 20% off Google Google Workspace (Google Meet) Standard Plan with the following codes: 96DRHDRA9J7GTN6(Email us for more)

FREE 10000+ Quiz Trivia and and Brain Teasers for All Topics including Cloud Computing, General Knowledge, History, Television, Music, Art, Science, Movies, Films, US History, Soccer Football, World Cup, Data Science, Machine Learning, Geography, etc....

List of Freely available programming books - What is the single most influential book every Programmers should read

- Bjarne Stroustrup - The C++ Programming Language

- Brian W. Kernighan, Rob Pike - The Practice of Programming

- Donald Knuth - The Art of Computer Programming

- Ellen Ullman - Close to the Machine

- Ellis Horowitz - Fundamentals of Computer Algorithms

- Eric Raymond - The Art of Unix Programming

- Gerald M. Weinberg - The Psychology of Computer Programming

- James Gosling - The Java Programming Language

- Joel Spolsky - The Best Software Writing I

- Keith Curtis - After the Software Wars

- Richard M. Stallman - Free Software, Free Society

- Richard P. Gabriel - Patterns of Software

- Richard P. Gabriel - Innovation Happens Elsewhere

- Code Complete (2nd edition) by Steve McConnell

- The Pragmatic Programmer

- Structure and Interpretation of Computer Programs

- The C Programming Language by Kernighan and Ritchie

- Introduction to Algorithms by Cormen, Leiserson, Rivest & Stein

- Design Patterns by the Gang of Four

- Refactoring: Improving the Design of Existing Code

- The Mythical Man Month

- The Art of Computer Programming by Donald Knuth

- Compilers: Principles, Techniques and Tools by Alfred V. Aho, Ravi Sethi and Jeffrey D. Ullman

- Gödel, Escher, Bach by Douglas Hofstadter

- Clean Code: A Handbook of Agile Software Craftsmanship by Robert C. Martin

- Effective C++

- More Effective C++

- CODE by Charles Petzold

- Programming Pearls by Jon Bentley

- Working Effectively with Legacy Code by Michael C. Feathers

- Peopleware by Demarco and Lister

- Coders at Work by Peter Seibel

- Surely You're Joking, Mr. Feynman!

- Effective Java 2nd edition

- Patterns of Enterprise Application Architecture by Martin Fowler

- The Little Schemer

- The Seasoned Schemer

- Why's (Poignant) Guide to Ruby

- The Inmates Are Running The Asylum: Why High Tech Products Drive Us Crazy and How to Restore the Sanity

- The Art of Unix Programming

- Test-Driven Development: By Example by Kent Beck

- Practices of an Agile Developer

- Don't Make Me Think

- Agile Software Development, Principles, Patterns, and Practices by Robert C. Martin

- Domain Driven Designs by Eric Evans

- The Design of Everyday Things by Donald Norman

- Modern C++ Design by Andrei Alexandrescu

- Best Software Writing I by Joel Spolsky

- The Practice of Programming by Kernighan and Pike

- Pragmatic Thinking and Learning: Refactor Your Wetware by Andy Hunt

- Software Estimation: Demystifying the Black Art by Steve McConnel

- The Passionate Programmer (My Job Went To India) by Chad Fowler

- Hackers: Heroes of the Computer Revolution

- Algorithms + Data Structures = Programs

- Writing Solid Code

- JavaScript - The Good Parts

- Getting Real by 37 Signals

- Foundations of Programming by Karl Seguin

- Computer Graphics: Principles and Practice in C (2nd Edition)

- Thinking in Java by Bruce Eckel

- The Elements of Computing Systems

- Refactoring to Patterns by Joshua Kerievsky

- Modern Operating Systems by Andrew S. Tanenbaum

- The Annotated Turing

- Things That Make Us Smart by Donald Norman

- The Timeless Way of Building by Christopher Alexander

- The Deadline: A Novel About Project Management by Tom DeMarco

- The C++ Programming Language (3rd edition) by Stroustrup

- Patterns of Enterprise Application Architecture

- Computer Systems - A Programmer's Perspective

- Agile Principles, Patterns, and Practices in C# by Robert C. Martin

- Growing Object-Oriented Software, Guided by Tests

- Framework Design Guidelines by Brad Abrams

- Object Thinking by Dr. David West

- Advanced Programming in the UNIX Environment by W. Richard Stevens

- Hackers and Painters: Big Ideas from the Computer Age

- The Soul of a New Machine by Tracy Kidder

- CLR via C# by Jeffrey Richter

- The Timeless Way of Building by Christopher Alexander

- Design Patterns in C# by Steve Metsker

- Alice in Wonderland by Lewis Carol

- Zen and the Art of Motorcycle Maintenance by Robert M. Pirsig

- About Face - The Essentials of Interaction Design

- Here Comes Everybody: The Power of Organizing Without Organizations by Clay Shirky

- The Tao of Programming

- Computational Beauty of Nature

- Writing Solid Code by Steve Maguire

- Philip and Alex's Guide to Web Publishing

- Object-Oriented Analysis and Design with Applications by Grady Booch

- Effective Java by Joshua Bloch

- Computability by N. J. Cutland

- Masterminds of Programming

- The Tao Te Ching

- The Productive Programmer

- The Art of Deception by Kevin Mitnick

- The Career Programmer: Guerilla Tactics for an Imperfect World by Christopher Duncan

- Paradigms of Artificial Intelligence Programming: Case studies in Common Lisp

- Masters of Doom

- Pragmatic Unit Testing in C# with NUnit by Andy Hunt and Dave Thomas with Matt Hargett

- How To Solve It by George Polya

- The Alchemist by Paulo Coelho

- Smalltalk-80: The Language and its Implementation

- Writing Secure Code (2nd Edition) by Michael Howard

- Introduction to Functional Programming by Philip Wadler and Richard Bird

- No Bugs! by David Thielen

- Rework by Jason Freid and DHH

- JUnit in Action

#BlackOwned #BlackEntrepreneurs #BlackBuniness #AWSCertified #AWSCloudPractitioner #AWSCertification #AWSCLFC02 #CloudComputing #AWSStudyGuide #AWSTraining #AWSCareer #AWSExamPrep #AWSCommunity #AWSEducation #AWSBasics #AWSCertified #AWSMachineLearning #AWSCertification #AWSSpecialty #MachineLearning #AWSStudyGuide #CloudComputing #DataScience #AWSCertified #AWSSolutionsArchitect #AWSArchitectAssociate #AWSCertification #AWSStudyGuide #CloudComputing #AWSArchitecture #AWSTraining #AWSCareer #AWSExamPrep #AWSCommunity #AWSEducation #AzureFundamentals #AZ900 #MicrosoftAzure #ITCertification #CertificationPrep #StudyMaterials #TechLearning #MicrosoftCertified #AzureCertification #TechBooks

Top 1000 Canada Quiz and trivia: CANADA CITIZENSHIP TEST- HISTORY - GEOGRAPHY - GOVERNMENT- CULTURE - PEOPLE - LANGUAGES - TRAVEL - WILDLIFE - HOCKEY - TOURISM - SCENERIES - ARTS - DATA VISUALIZATION

Top 1000 Africa Quiz and trivia: HISTORY - GEOGRAPHY - WILDLIFE - CULTURE - PEOPLE - LANGUAGES - TRAVEL - TOURISM - SCENERIES - ARTS - DATA VISUALIZATION

Exploring the Pros and Cons of Visiting All Provinces and Territories in Canada.

Exploring the Advantages and Disadvantages of Visiting All 50 States in the USA

Health Health, a science-based community to discuss health news and the coronavirus (COVID-19) pandemic

- The pull-out method: Why this common contraceptive fails to deliverby /u/Kampala_Dispatch on July 26, 2024 at 7:51 pm

submitted by /u/Kampala_Dispatch [link] [comments]

- Health Canada data reveals surprising number of adverse cannabis reactions (spoiler: it's small)by /u/carajuana_readit on July 26, 2024 at 5:49 pm

submitted by /u/carajuana_readit [link] [comments]

- Online portals deliver scary health news before doctors can weigh inby /u/washingtonpost on July 26, 2024 at 4:37 pm

submitted by /u/washingtonpost [link] [comments]

- Vaccine 'sharply cuts risk of dementia' new study findsby /u/SubstantialSnow7114 on July 26, 2024 at 1:53 pm

submitted by /u/SubstantialSnow7114 [link] [comments]

- Calls to limit sexual partners as mpox makes a resurgence in Australiaby /u/boppinmule on July 26, 2024 at 12:31 pm

submitted by /u/boppinmule [link] [comments]

Today I Learned (TIL) You learn something new every day; what did you learn today? Submit interesting and specific facts about something that you just found out here.

- TIL that in Thailand, if your spouse cheats on you, you can legally sue their lover for damages and can receive up to 5,000,000 THB ($140,000 USD) or more under Section 1523 of the Thai Civil and Commercial Codeby /u/Mavrokordato on July 26, 2024 at 6:57 pm

submitted by /u/Mavrokordato [link] [comments]

- TIL that with a population of 170 million people, Bangladesh is the most populous country to have never won a medal at the Olympic Games.by /u/Blackraven2007 on July 26, 2024 at 6:49 pm

submitted by /u/Blackraven2007 [link] [comments]

- TIL a psychologist got himself admitted to a mental hospital by claiming he heard the words "empty", "hollow" and "thud" in his head. Then, it took him two months to convince them he was sane, after agreeing he was insane and accepting medication.by /u/Hadeverse-050 on July 26, 2024 at 6:44 pm

submitted by /u/Hadeverse-050 [link] [comments]

- TIL Senator John Edwards of NC, USA cheated on his wife and had a child with another woman. He tried to deny it but eventually caved and admitted his mistake. He used campaign funds and was indicted by a grand jury. His life story inspired the show "The Good Wife" by Robert & Michelle Kingby /u/AdvisorPast637 on July 26, 2024 at 6:09 pm

submitted by /u/AdvisorPast637 [link] [comments]

- TIL Zhang Shuhong was a Chinese businessman who committed suicide after toys made at his factory for Fisher-Price (a division of Mattel) were found to contain lead paintby /u/Hopeful-Candle-4884 on July 26, 2024 at 4:43 pm

submitted by /u/Hopeful-Candle-4884 [link] [comments]

Reddit Science This community is a place to share and discuss new scientific research. Read about the latest advances in astronomy, biology, medicine, physics, social science, and more. Find and submit new publications and popular science coverage of current research.

- Human decision makers who possess the authority to override ML predictions may impede the self-correction of discriminatory models and even induce initially unbiased models to become discriminatory with timeby /u/f1u82ypd on July 26, 2024 at 6:29 pm

submitted by /u/f1u82ypd [link] [comments]

- Study uses Game of Thrones (GOT) to advance understanding of face blindness: Psychologists have used the TV series GOT to understand how the brain enables us to recognise faces. Their findings provide new insights into prosopagnosia or face blindness, a condition that impairs facial recognition.by /u/AnnaMouse247 on July 26, 2024 at 5:14 pm

submitted by /u/AnnaMouse247 [link] [comments]

- Specific genes may be related to the trajectory of recovery for stroke survivors, study finds. Researchers say genetic variants were strongly associated with depression, PTSD and cognitive health outcomes. Findings may provide useful insights for developing targeted therapies.by /u/AnnaMouse247 on July 26, 2024 at 5:08 pm

submitted by /u/AnnaMouse247 [link] [comments]

- New experimental drug shows promise in clearing HIV from brain: originally developed to treat cancer, study finds that by targeting infected cells in the brain, drug may clear virus from hidden areas that have been a major challenge in HIV treatment.by /u/AnnaMouse247 on July 26, 2024 at 4:57 pm

submitted by /u/AnnaMouse247 [link] [comments]

- Rapid diagnosis sepsis tests could decrease result wait times from days to hours, researchers report in Natureby /u/Science_News on July 26, 2024 at 3:50 pm

submitted by /u/Science_News [link] [comments]

Reddit Sports Sports News and Highlights from the NFL, NBA, NHL, MLB, MLS, and leagues around the world.

- Charles Barkley leaves door open to post-TNT job optionsby /u/PrincessBananas85 on July 26, 2024 at 8:47 pm

submitted by /u/PrincessBananas85 [link] [comments]

- Report: Nuggets sign Westbrook to 2-year, $6.8M dealby /u/Oldtimer_2 on July 26, 2024 at 8:13 pm

submitted by /u/Oldtimer_2 [link] [comments]

- Dolphins signing Tua to 4-year, $212.4M extensionby /u/Oldtimer_2 on July 26, 2024 at 8:09 pm

submitted by /u/Oldtimer_2 [link] [comments]

- Rams cornerback Derion Kendrick suffers season-ending torn ACLby /u/Oldtimer_2 on July 26, 2024 at 8:06 pm

submitted by /u/Oldtimer_2 [link] [comments]

- Hosting the Olympics has become financially untenable, economists sayby /u/toaster_strudel_ on July 26, 2024 at 7:34 pm

submitted by /u/toaster_strudel_ [link] [comments]

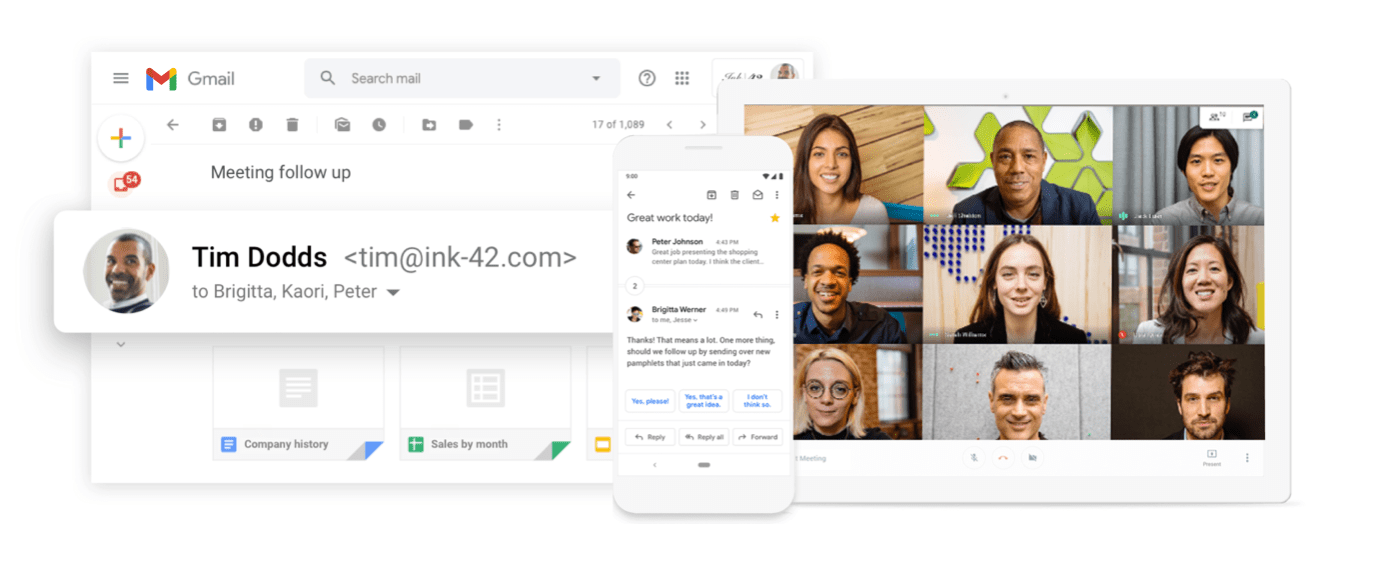

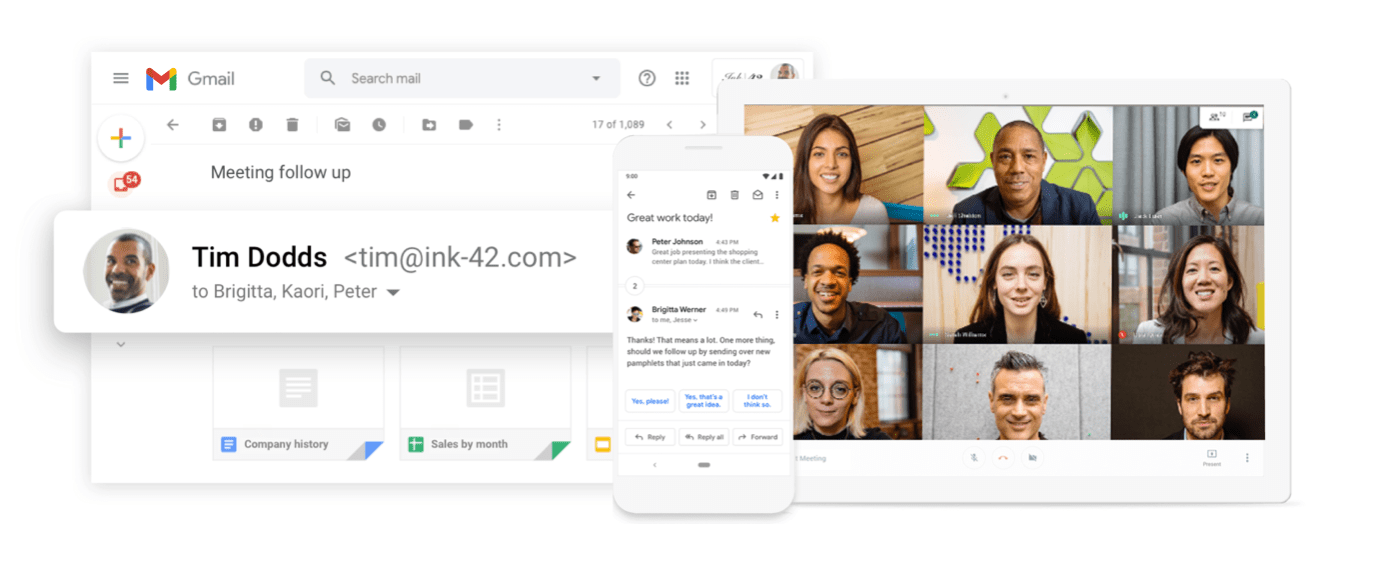

Turn your dream into reality with Google Workspace: It’s free for the first 14 days.

Get 20% off Google Google Workspace (Google Meet) Standard Plan with the following codes:

96DRHDRA9J7GTN6

96DRHDRA9J7GTN6

63F733CLLY7R7MM

63F7D7CPD9XXUVT

63FLKQHWV3AEEE6

63JGLWWK36CP7WM

63KKR9EULQRR7VE

63KNY4N7VHCUA9R

63LDXXFYU6VXDG9

63MGNRCKXURAYWC

63NGNDVVXJP4N99

63P4G3ELRPADKQU

With Google Workspace, Get custom email @yourcompany, Work from anywhere; Easily scale up or down

Google gives you the tools you need to run your business like a pro. Set up custom email, share files securely online, video chat from any device, and more.

Google Workspace provides a platform, a common ground, for all our internal teams and operations to collaboratively support our primary business goal, which is to deliver quality information to our readers quickly.

Get 20% off Google Workspace (Google Meet) Business Plan (AMERICAS): M9HNXHX3WC9H7YE

C37HCAQRVR7JTFK

C3AE76E7WATCTL9

C3C3RGUF9VW6LXE

C3D9LD4L736CALC

C3EQXV674DQ6PXP

C3G9M3JEHXM3XC7

C3GGR3H4TRHUD7L

C3LVUVC3LHKUEQK

C3PVGM4CHHPMWLE

C3QHQ763LWGTW4C

Even if you’re small, you want people to see you as a professional business. If you’re still growing, you need the building blocks to get you where you want to be. I’ve learned so much about business through Google Workspace—I can’t imagine working without it. (Email us for more codes)