Emerging AI Innovations: Top Trends Shaping the Landscape in September 2023.

In the dynamic landscape of Artificial Intelligence, September 2023 stands as a testament to the relentless pace of innovation. Titans of the industry such as Google, OpenAI, and Meta are at the forefront, unveiling cutting-edge developments with each passing day. The remarkable feats achieved by models like GPT are now being complemented by advancements in Lifelong Learning Machine Systems (LLMS). As we delve deeper into the realms of Generative AI and Discriminative AI, we are ushered into an era where machines don’t just compute—they conceptualize. Moreover, the rise of Explainable AI emphasizes the industry’s dedication to clarity and understanding, ensuring that as we integrate these AI systems more deeply into our daily lives, we remain fully informed about their decision-making processes. Join us as we navigate through the pivotal AI trends shaping September 2023 and discover how these technological wonders are transforming the world we live in.

AI Monthly Rundown September 2023: The Future of LLMs in Search!

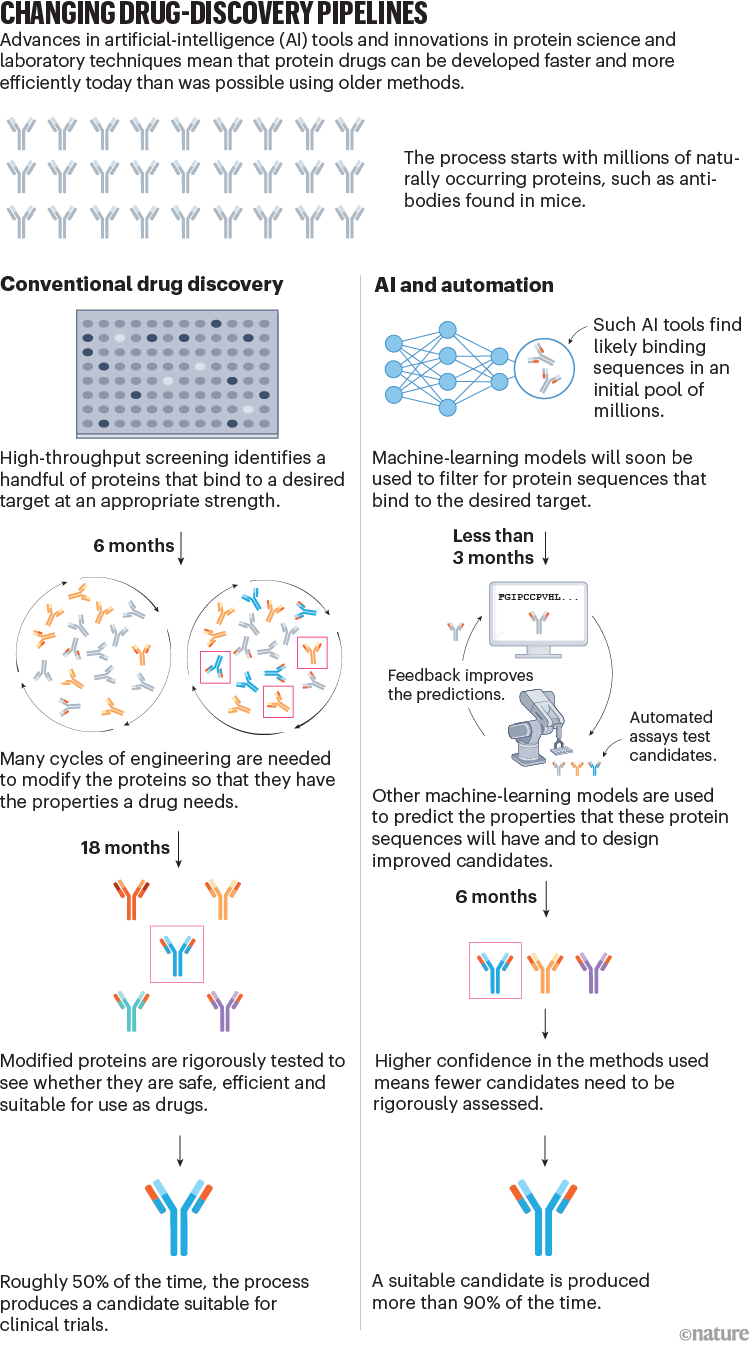

In this blog, we’ll cover the evolution of search and large language models, Amazon’s investment in Anthropic and generative AI updates, Google’s advancements in personalized route suggestions and language modeling, DeepMind’s AlphaMissense system for predicting genetic diseases, OpenAI’s DALL·E 3 and the addition of voice and image capabilities to ChatGPT, Getty Images’ Generative AI art tool, Vectara’s Boomerang LLM, important AI contributions from Google and Apple, and the surge in hiring for Generative AI talent.

LLMs, or Large Language Models, have undoubtedly made a significant impact on the way we search for information. However, it’s unlikely that they will completely replace traditional search engines. Instead, I believe that LLMs and search engines will evolve and blend together to offer users the best of both worlds.

Search engines have been around for over 29 years and have become incredibly proficient at providing fresh, relevant, and comprehensive results. This level of expertise is the result of years of industrial research. On the other hand, LLMs, with their phenomenal language understanding and generation capabilities, offer a new dimension to information retrieval. However, they sometimes lack verifiability, attributions, and relevancy.

In my view, we can expect the rise of Language Search Models (LSMs), where the strengths of both LLMs and traditional search engines will be combined. This hybrid approach will leverage the comprehensiveness and freshness of search engines while harnessing LLMs’ advanced language processing abilities. By incorporating the best of both technologies, we can enhance the search experience and deliver even more relevant and accurate results to users.

Guess what? Amazon has just announced an investment of up to $4 billion in Anthropic! This investment is part of their larger plan to collaborate and create top-notch foundation models for the industry.

Now, let me fill you in on the details. Anthropic is known for their cutting-edge safety research and products. And with Amazon Web Services (AWS) on board, they’re going to take their game to a whole new level. AWS, being experts in running secure and reliable infrastructure, will make Anthropic’s AI accessible to a wider audience.

But that’s not all! AWS will become Anthropic’s go-to cloud provider for mission-critical workloads. So, any heavy-duty tasks will rely on AWS’s powerful capabilities. Plus, this collaboration will further boost Anthropic’s support for Amazon Bedrock.

With Amazon’s massive investment, Anthropic and AWS are primed to create some seriously impressive technological advancements. And as a result, we can expect safer and more controllable AI systems. Talk about a win-win situation!

Get ready for some exciting developments in the world of artificial intelligence, my friend. This collaboration might just be a game-changer.

Hey there! Guess what? Google and DeepMind have come up with something super cool for Google Maps! They’ve developed this amazing AI algorithm that makes your route suggestions even more personalized. Can you imagine that?

This algorithm is no joke, it has a whopping 360 million parameters! It’s able to take into account real driving data from Maps users to figure out what factors are important to you when you’re making decisions about your route. It considers things like travel time, tolls, road conditions, and even your personal preferences. It’s like having your own personal navigator right in your pocket!

Now, you might be curious how this algorithm actually works. Well, it uses something called Inverse Reinforcement Learning (IRL) to learn from your behavior. And it also uses another fancy technique called Receding Horizon Inverse Planning (RHIP) for both short- and long-distance travel.
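To make the idea a bit more concrete, here is a toy sketch of the intuition behind inverse reinforcement learning: instead of being told how much a traveler cares about time versus tolls, we infer those weights from the routes they actually picked. This is not RHIP and not Google's implementation. The feature names, the numbers, and the simple perceptron-style update are all assumptions made for illustration.

```python
# Toy illustration of the inverse-reinforcement-learning idea: infer how a
# traveler weighs route features from the routes they actually chose.
# Feature names and all numbers below are hypothetical.

FEATURES = ("minutes", "toll_usd", "rough_road_km")

# Each demo: (candidate routes as feature tuples, index of the route chosen).
# This imaginary traveler accepts longer trips to avoid tolls.
DEMOS = [
    ([(20, 5, 0), (30, 0, 0)], 1),
    ([(15, 3, 0), (22, 0, 1)], 1),
    ([(40, 0, 0), (35, 4, 0)], 0),
]


def route_cost(weights, route):
    """Linear cost: weighted sum of a route's features."""
    return sum(w * x for w, x in zip(weights, route))


def best_route(weights, candidates):
    """Index of the cheapest candidate under the current weights."""
    return min(range(len(candidates)),
               key=lambda i: route_cost(weights, candidates[i]))


def learn_weights(demos, epochs=50, lr=0.01):
    """Whenever the planner's pick differs from the chosen route, shift the
    weights so the chosen route becomes cheaper relative to that pick."""
    weights = [1.0] * len(FEATURES)
    for _ in range(epochs):
        for candidates, chosen in demos:
            predicted = best_route(weights, candidates)
            if predicted != chosen:
                for k in range(len(weights)):
                    diff = candidates[chosen][k] - candidates[predicted][k]
                    # Keep weights non-negative: features are costs, not rewards.
                    weights[k] = max(0.0, weights[k] - lr * diff)
    return weights


weights = learn_weights(DEMOS)
print(dict(zip(FEATURES, weights)))
```

After training, the toll weight ends up larger than the time weight, which matches how the imaginary traveler behaved. A production system would work over a full road graph rather than a handful of hand-written candidates.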

And the results are impressive! Tests have shown that the accuracy of suggested routes for two-wheelers has improved by a whopping 16 to 24 percent with the implementation of RHIP. And you know what’s even cooler? The algorithm is constantly learning and evolving, so it’s only going to get better at predicting the routes you prefer over time.

So, next time you’re hitting the road, keep an eye out for those hyper-personalized route suggestions powered by Google’s awesome AI algorithm. Happy travels!

So, there’s this fascinating survey that dives deep into the world of LLM-based agents. It’s like a one-stop shop for understanding these agents and how they can be a force for good. From the philosophical roots of agents to their development in AI, this survey covers it all.

But wait, what exactly are LLM-based agents, you ask? Well, they’re AI agents built on top of large language models, which the survey argues are a well-suited foundation for agents. The survey also presents a conceptual framework that can be customized for various applications. Talk about flexibility!

But that’s not all. This survey takes us on a journey through the extensive applications of LLM-based agents. We explore single-agent scenarios, multi-agent scenarios, and even how these agents can collaborate with humans. It’s all about teamwork, right?

And here’s the exciting part: we get to delve into agent societies. We’re talking about understanding the behavior and personality of LLM-based agents, the social phenomena that emerge when they form societies, and the valuable insights they bring to our own human society. It’s like a sneak peek into a future where AI agents and humans coexist.

To top it all off, this survey discusses key topics and challenges within the field. So, if you’re curious about the potential and rise of LLM-based agents, this survey is your go-to resource. It even paints a vivid picture of a society where humans and AI agents live harmoniously together. Incredible, right?

There’s a groundbreaking development in the world of 3D printing, and it involves the power of artificial intelligence. MIT researchers have come up with a tool called Style2Fab, which gives designers the ability to personalize their 3D models like never before.

Traditionally, when adding custom design elements to a 3D model, there has always been a risk of compromising the functionality of the final object. But with Style2Fab, that’s no longer an issue. This innovative tool uses generative AI to ensure that any design changes made still maintain the object’s intended functionality.

So how does it work? Well, it’s incredibly user-friendly. Designers simply need to provide natural language prompts describing the desired design elements they want to add. No need for complex technical skills or software expertise. Once the prompts are given, the AI takes over and generates the necessary modifications to the 3D model.

Once the personalized design is ready, it’s time to bring it to life with a 3D printer. The user can simply fabricate the object and voila! You have a custom-made, functional 3D-printed object that perfectly matches your vision.

This technology opens up endless possibilities for customization and personalization in 3D printing. With Style2Fab, designers can unleash their creativity without any limitations. So get ready to transform your ideas into tangible objects with the power of AI and 3D printing.

Google has come out with an even better version of Bard, and it’s got some exciting new features. Let me tell you about them.

First up, we’ve got Bard Extensions in English. This means that Bard can now dig into all the Google tools you use every day, like Gmail, Docs, Drive, Maps, YouTube, and even Google Flights and hotels. So, if you’re searching for something and the information is spread across multiple apps and services, Bard will be able to find and show you exactly what you need.

Next, we’ve got a little something called “Google it.” Now, when Bard gives you an answer, you can easily double-check its accuracy. Just click on the “G” icon and Bard will read through its response and evaluate whether there’s content across the web to back it up.

Another cool feature is shared conversations. If someone sends you a Bard chat through a public link, you can jump right in and keep the conversation going. You can ask more questions, explore new ideas, or simply use it as a starting point for further discussions. It’s a great way to collaborate and brainstorm with others.

And lastly, Google has expanded access to existing English language features. This means that more languages now have access to cool features like uploading images with Lens, getting Search images in responses, and even modifying Bard’s responses.

These awesome updates were made possible thanks to the PaLM 2 model. Google is really pushing the boundaries with Bard, and we’re excited to see how it continues to evolve and make our lives easier.

Intel has some exciting news for AI enthusiasts. Their new chip, set to launch in December, will allow you to run generative AI chatbots directly on your laptop, without relying on the computing power of cloud data centers. How cool is that?

This breakthrough has been made possible thanks to Intel’s upcoming “Meteor Lake” laptop chip, which includes innovative AI data-crunching features. And that’s not all. Intel is also releasing new software tools to support this capability, ensuring a seamless user experience.

But wait, there’s more. During a demonstration, Intel showed off laptops that could not only generate a song in the style of Taylor Swift but also answer questions in a conversational manner, all without needing an internet connection. Talk about flexibility and convenience!

And it doesn’t stop there. If you’re a fan of Microsoft’s Copilot AI assistant, you’ll be thrilled to know that it will be compatible with Intel-based PCs. This means you can enjoy the power and convenience of Copilot right on your own laptop.

With Intel’s latest innovations, the world of AI is becoming more accessible and versatile than ever before. Get ready to unleash the potential of AI right from the comfort of your own laptop. Exciting times lie ahead!

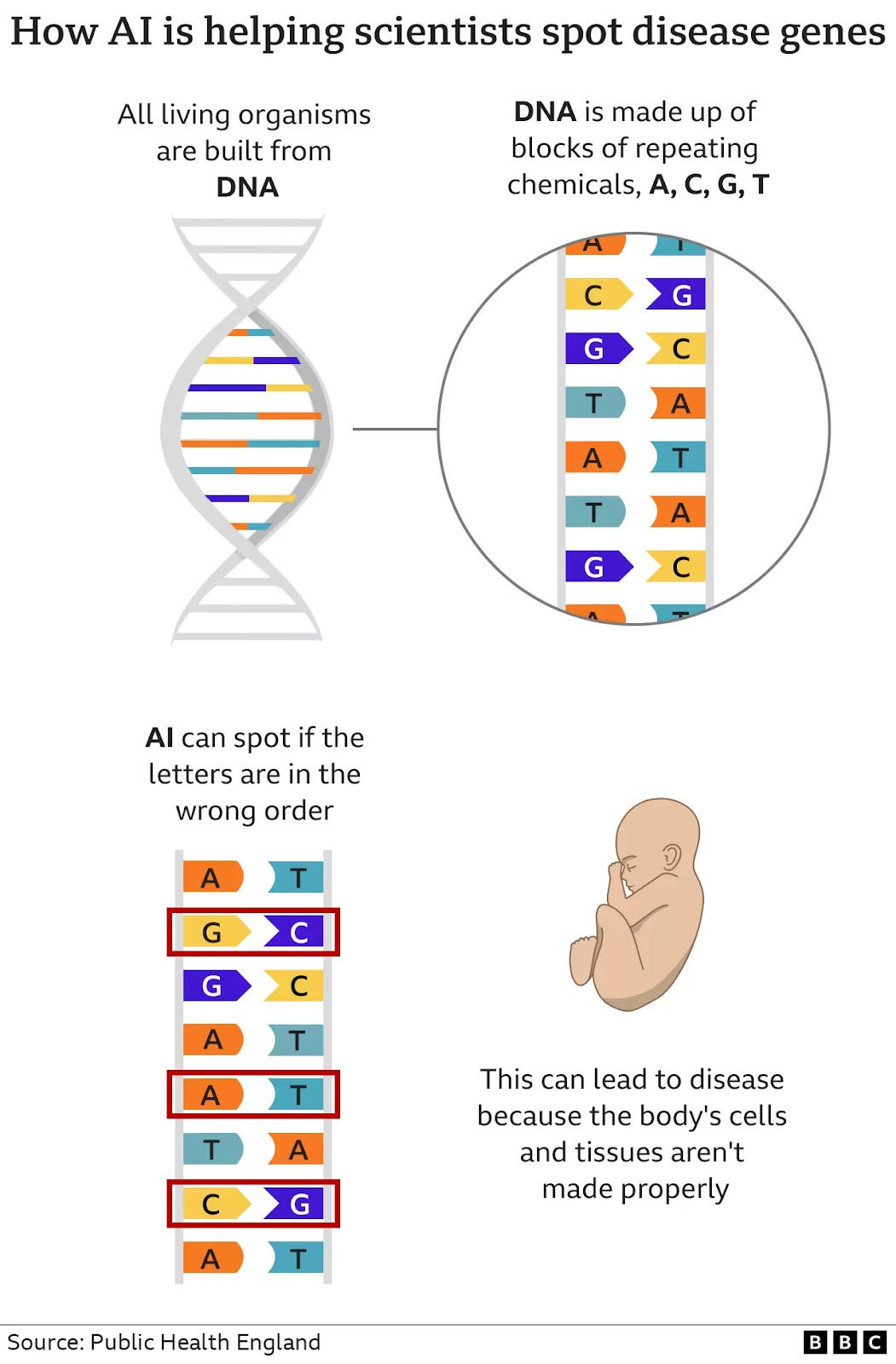

So, check this out. DeepMind, the artificial intelligence wunderkind from Google, has come up with a pretty amazing system called AlphaMissense. And get this—it’s designed to predict genetic diseases! How cool is that?

So, here’s how it works. This AI system looks at the letters in our DNA and figures out if they’ll produce the correct protein shape. If not, it flags them as potentially disease-causing. It’s like having a super-smart DNA inspector on the case!

Now, here’s the thing. Up until now, genetic disease hunters didn’t have a whole lot of knowledge about which parts of our DNA could lead to diseases. They had to sift through billions of chemical building blocks to find any clues. Talk about a needle in a haystack!

But with AlphaMissense, things are changing. DeepMind’s system has been able to classify a whopping 89% of the letter changes, or mutations, as either benign or disease-causing. That’s a huge leap from the mere 0.1% they could classify before. This is a game-changer!

Imagine the possibilities. This AI breakthrough could revolutionize the field of genetics and help researchers better understand and develop treatments for genetic diseases. Who knows what other mysteries we’ll uncover with the help of DeepMind’s brilliant algorithms? It’s an exciting time to be alive!

OpenAI recently introduced their latest text-to-image model, DALL·E 3, that has the ability to transform detailed requests into accurate and highly precise images. This new model is native to ChatGPT, allowing users to utilize ChatGPT to create tailored and specific prompts for DALL·E 3. And the best part is, if the generated image isn’t exactly what you envisioned, you can easily ask ChatGPT to make adjustments.

Comparing DALL·E 3 to its predecessor, DALL·E 2, the improvements are quite remarkable. With the same prompt, DALL·E 3 consistently produces significantly better results, as demonstrated in a visual comparison of an expressive oil painting of a basketball player dunking, depicted as an explosion of a nebula.

OpenAI has made it a priority to ensure that DALL·E 3 doesn’t generate images with violent, adult, or hateful content. They’ve implemented measures to prevent the model from creating such objectionable outputs. Additionally, DALL·E 3 has been designed to decline requests for images in the style of living artists. Creators also have the option to exclude their images from being used in the training of OpenAI’s future image generation models.

Currently, DALL·E 3 is in the research preview stage and will be made available to ChatGPT Plus and Enterprise customers in October. Later this fall, it will also be available through the API and in Labs. So, exciting things are coming up for those who are eager to dive into the world of text-to-image generation with DALL·E 3!

Amazon recently revealed some exciting news at its annual devices event. They are introducing generative AI to both Alexa and Fire TV, offering enhanced experiences for users.

One notable update is the implementation of a new generative AI model within the Echo family of devices. This model has been specifically designed to optimize voice interactions. It delves deeper into conversational experiences by considering not just the spoken words, but also factors like body language, eye contact, and gestures. This holistic approach is set to make interactions with Alexa even more powerful and intuitive.

In addition to the Echo devices, Amazon is also bringing generative AI updates to Fire TV’s voice search functionality. This means that interacting with Alexa on Fire TV will become more conversational, allowing users to discover new content based on specific preferences and requests.

These AI updates pave the way for more natural and seamless interactions with Amazon’s smart devices. By incorporating body language and context, Alexa will be able to better understand user needs and preferences, leading to a more personalized and intuitive user experience.

With these generative AI advancements, Amazon is taking a significant step towards integrating AI technology into our everyday lives, making our interactions with Alexa and Fire TV more conversational, engaging, and enjoyable.

So, we’ve been hearing a lot about these large language models (LLMs) trained with self-supervision, right? Well, turns out they’re not just impressive at predictive tasks, they also have some serious compression skills!

In this pretty interesting research, authors from Google DeepMind and Meta decided to delve into the compression abilities of LLMs. They wanted to understand the relationship between compression and prediction. And guess what? They found out that these foundation models, which are primarily trained on text, are like the Jack-of-all-trades when it comes to compression.

You see, these LLMs have this amazing ability to learn within the context of the text they’re fed. And this in-context learning superpower makes them pretty effective at compressing all sorts of things. Take for example Chinchilla 70B (cute name, by the way): it compresses ImageNet patches to 43.4% of their raw size and LibriSpeech samples to 16.4%. Lower is better here, and those numbers beat domain-specific compressors like PNG (58.5%) and FLAC (30.3%).
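Why would a good predictor be a good compressor? With arithmetic coding, a symbol that the model predicted with probability p costs roughly -log2(p) bits, so better predictions mean a shorter file. Here is a minimal sketch of that accounting. The two toy models and the sample text are assumptions for illustration and have nothing to do with Chinchilla itself.

```python
import math

ALPHABET = "ab"  # hypothetical two-symbol alphabet
TEXT = "aaaaaaab" * 4  # hypothetical, heavily skewed sample data


def uniform_model(context):
    """Ignores the context: every symbol is equally likely."""
    return {c: 1 / len(ALPHABET) for c in ALPHABET}


def adaptive_model(context):
    """Learns from the context seen so far (add-one smoothed counts)."""
    total = len(context) + len(ALPHABET)
    return {c: (context.count(c) + 1) / total for c in ALPHABET}


def ideal_code_length_bits(text, predict):
    """Sum of -log2 p(symbol | context): the size an arithmetic coder
    driven by `predict` would approach."""
    bits = 0.0
    for i, symbol in enumerate(text):
        p = predict(text[:i])[symbol]
        bits += -math.log2(p)
    return bits


uniform_bits = ideal_code_length_bits(TEXT, uniform_model)
adaptive_bits = ideal_code_length_bits(TEXT, adaptive_model)
print(f"uniform model:  {uniform_bits:.1f} bits")
print(f"adaptive model: {adaptive_bits:.1f} bits")
```

The adaptive model picks up on the pattern as it reads, so it needs fewer bits than the model that never learns. That is a tiny version of the in-context learning effect described above.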

So, what’s the big takeaway here? Well, next time you need something compressed, maybe you should consider calling up one of these LLMs. They might just surprise you with their incredible compression skills!

So, get this—Meta, you know, the company behind all those fancy virtual reality headsets and whatnot, has some pretty cool plans up their sleeves. They’re actually working on creating a whole bunch of chatbots that are specifically designed to connect with younger users. And get this—they’re not just your average run-of-the-mill bots either. Oh no, these chatbots are gonna have personalities. Yeah, you heard me right, personas!

The idea behind it is to make chatting with these bots a lot more exciting and engaging for the young crowd. And honestly, who doesn’t love a little extra color and sass, right? I mean, sure, you’ll be able to chat with them about all sorts of stuff, but these bots are gonna have some serious attitude.

But it doesn’t stop there! Meta’s got big plans for their chatbot army. They’re not only creating personalities for the young ones, but they’re even putting together some bots for celebrities. That means you might actually get to have a little tête-à-tête with your favorite star! How cool is that?

And last but not least, Meta’s also developing productivity-focused chatbots. You know, the kind that’ll help you with coding, managing tasks, and basically being all-around handy. So, whether you’re looking for some entertainment or a helping hand, it sounds like Meta’s got you covered with their upcoming sassy chatbots. Exciting times ahead, my friends!

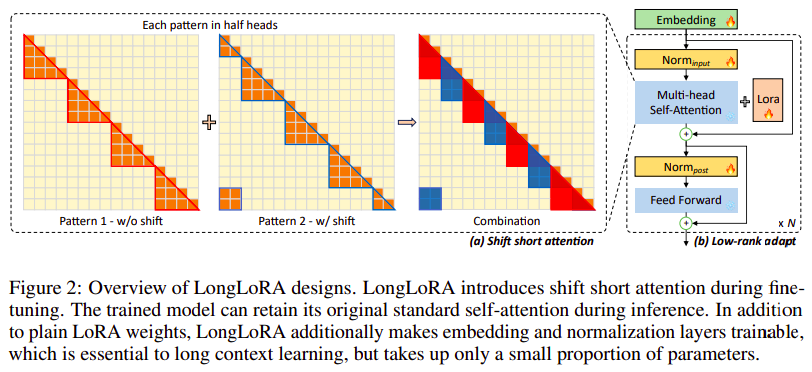

Have you ever wished you could extend the context sizes of your pre-trained LLMs without spending a fortune on computation costs? Well, new research has come to the rescue with LongLoRA, an ultra-efficient fine-tuning method. This method allows you to increase the context length of your LLMs without the need for an abundance of time or powerful GPU resources.

Normally, training LLMs with longer context sizes can be a time-consuming and resource-heavy process. Just imagine, extending the context length from 2048 to 8192 could increase the computational cost of the self-attention layers by a whopping 16 times, because that cost grows with the square of the context length! This is where LongLoRA steps in to save the day. It achieves efficiency in two key ways:

Firstly, it utilizes sparse local attention instead of dense global attention during fine-tuning. The sparse pattern is optional at inference time, so the fine-tuned model can still run with standard attention. This clever technique helps to significantly reduce computational requirements.

Secondly, LongLoRA incorporates LoRA (Low-Rank Adaptation) for context extension. By leveraging LoRA, the method achieves remarkable performance while keeping the original model architectures intact.
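Some back-of-the-envelope arithmetic shows where both savings come from. This is only a counting sketch under stated assumptions: the group size, layer width, and rank below are hypothetical values picked for illustration, and the grouping ignores the shifting between groups that LongLoRA applies.

```python
def dense_attention_pairs(seq_len):
    """Token pairs scored by full self-attention: every token sees every token."""
    return seq_len * seq_len


def grouped_attention_pairs(seq_len, group_size):
    """Token pairs scored when attention stays inside non-overlapping groups."""
    if seq_len % group_size != 0:
        raise ValueError("seq_len must be a multiple of group_size")
    return (seq_len // group_size) * group_size * group_size


def lora_trainable_params(d_in, d_out, rank):
    """Parameters in a low-rank update B @ A, with A of shape (rank, d_in)
    and B of shape (d_out, rank). The original weight stays frozen."""
    return rank * d_in + d_out * rank


# Going from 2048 to 8192 tokens is 4x longer, so dense attention costs 16x more.
growth = dense_attention_pairs(8192) / dense_attention_pairs(2048)

# Hypothetical group size of 2048 tokens during fine-tuning.
dense = dense_attention_pairs(8192)
grouped = grouped_attention_pairs(8192, 2048)

# Hypothetical 4096 x 4096 weight matrix adapted with rank 8.
full = 4096 * 4096
low_rank = lora_trainable_params(4096, 4096, 8)

print(f"dense attention growth: {growth:.0f}x")
print(f"grouped vs dense pairs: {grouped} vs {dense}")
print(f"LoRA vs full trainable parameters: {low_rank} vs {full}")
```

Under these assumptions, local attention scores a quarter of the token pairs, and the low-rank update trains a small fraction of the parameters of the full matrix.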

The results speak for themselves. LongLoRA has demonstrated impressive performance across a range of tasks using LLaMA-2 models, from 7B/13B to 70B. Notably, it was able to extend LLaMA-2 7B from a 4k context to an impressive 100k, and LLaMA-2 70B to 32k – all on a single 8x A100 machine. This makes LongLoRA both easy to use and incredibly practical.

So, if you’re looking to maximize the capabilities of your LLMs without breaking the bank, LongLoRA might just be the solution you’ve been waiting for.

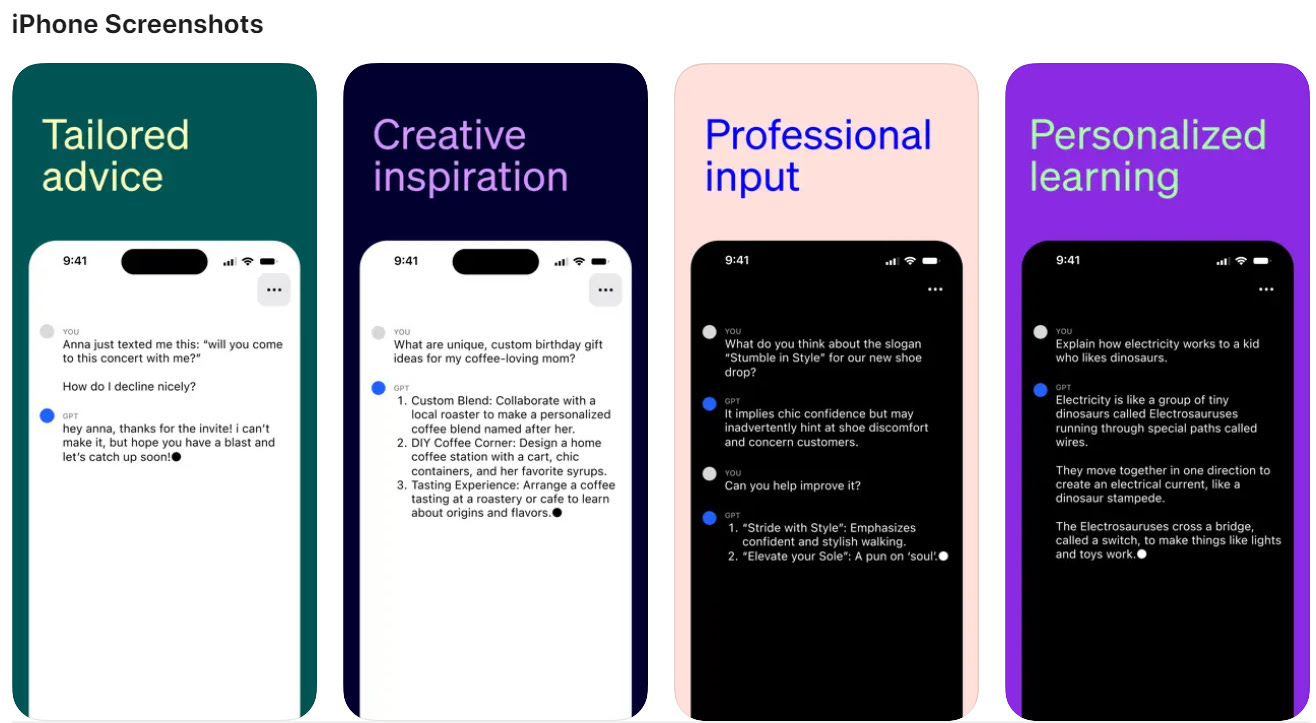

Exciting news! OpenAI is taking conversational AI to a whole new level with its latest update to ChatGPT. They’re introducing voice and image capabilities, making it possible for users to have voice conversations and show images to ChatGPT. This means that interacting with ChatGPT just got a whole lot more intuitive and versatile.

With this new feature, users can now engage in live conversations about landmarks. Imagine being able to ask ChatGPT about a famous monument and getting all the information you need just by snapping a picture of it. Additionally, if you’re stuck on what to cook for dinner, you can simply show pictures of the ingredients in your fridge to ChatGPT and receive recipe suggestions. How convenient is that?

But it doesn’t stop there! OpenAI is also allowing users to share photos to receive math problem hints. So, if you’re struggling with a tricky equation, just snap a quick picture and let ChatGPT assist you. This capability will surely come in handy for students and anyone who loves a bit of mathematical challenge.

The voice and image capabilities will be gradually rolled out to Plus and Enterprise users over the next couple of weeks. Voice will be available on both iOS and Android, while images will be supported on all platforms.

What’s more, ChatGPT can now understand and reason about images, including photos, screenshots, and even text-containing documents. And if you need to discuss multiple images, they’ve got you covered. There’s also a drawing tool in the mobile app that lets you point ChatGPT at a specific part of an image.

Get ready to experience ChatGPT like never before. The era of voice and image conversations has arrived!

Getty Images has recently introduced a cool new AI art tool called Generative AI by Getty Images. What it does is pretty remarkable: it uses an AI model provided by NVIDIA to transform text descriptions into actual images. Isn’t that mind-blowing?

But here’s the interesting part: Getty Images has made sure that this tool is not only technologically advanced but also “commercially safer” than other similar solutions out there. They have put in some measures to prevent disinformation and copyright infringement. That’s definitely a smart move considering how important it is to protect copyrights and avoid spreading false information.

Getty Images is also being fair to the talented artists out there. They will compensate the contributors whose work is used to train the AI generator and even share the revenues generated from the tool. Now that’s a great way to support the creative community!

If you’re eager to try out the tool, you can access it on Getty’s website. And hey, it’s not just limited to their website. You can also integrate the tool into apps and websites through an API. That’s pretty cool because it means more people can get to enjoy its creative possibilities.

Getty Images isn’t the only company exploring ethical approaches to generative AI. Bria and Shutterstock are also delving into this fascinating field. It’s exciting to see companies taking responsibility and making sure AI is used in a way that benefits everyone ethically and legally.

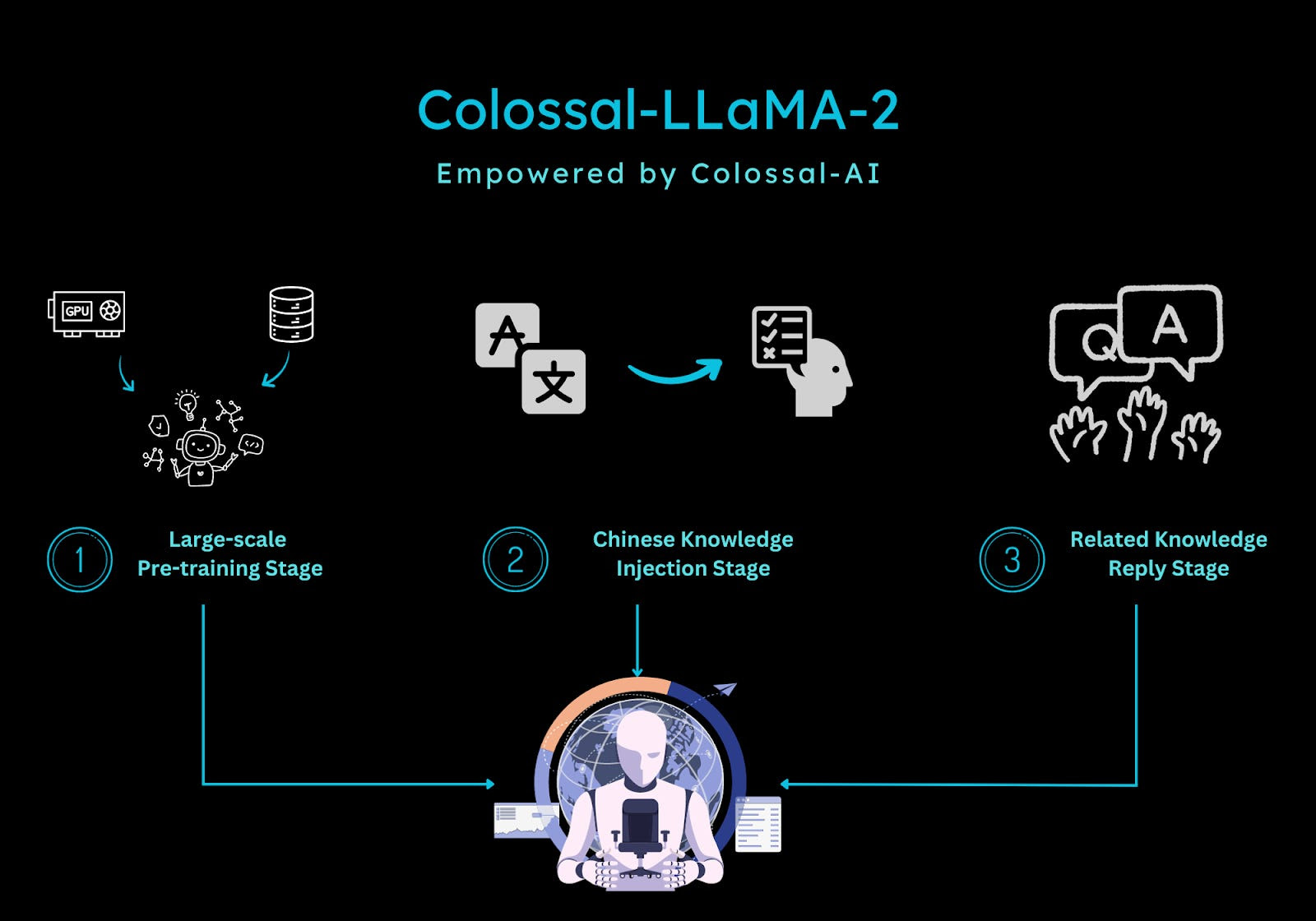

Colossal-AI recently launched an exciting new product called Colossal-LLaMA-2. This domain-specific language model solution is making waves in the industry, and for good reason. One of the standout features of Colossal-LLaMA-2 is that it is open-source and free for commercial use. This means that users can take advantage of all its capabilities, including in commercial products, without having to worry about any hidden costs or subscriptions.

What makes Colossal-LLaMA-2 even more impressive is that it achieves outstanding results while using a relatively small amount of data and training time. This not only saves users valuable resources but also lowers costs significantly. It’s no wonder that this model has quickly gained attention and recognition.

In addition to its cost-effectiveness, Colossal-AI’s Chinese version of LLaMA-2 has proven to be a top performer in various evaluation benchmarks. Colossal-AI has made some noteworthy improvements with this release, including vocabulary expansion, a data cleaning system, and a multi-stage pre-training scheme. These changes aim to improve the model’s abilities in both Chinese and English.

With Colossal-LLaMA-2, users can rely on a high-quality language model solution that is free for commercial use and delivers impressive results. Whether you’re working with Chinese or English, this model is sure to provide you with the accuracy and performance you need.

Hey there! Big news in the world of artificial intelligence! OpenAI, the AI research company, is apparently considering selling shares, and this could potentially skyrocket its valuation. The current estimation is that it could jump from $29 billion all the way up to a staggering $80 billion to $90 billion range! That’s a massive leap!

Moving on to another interesting tidbit, it seems like Jony Ive, the former design genius behind Apple, and Sam Altman, the CEO of OpenAI, have been having some intriguing conversations. Apparently, they’ve been discussing the possibility of creating a brand new AI hardware device. We don’t have all the details yet, but it’s exciting to ponder what kind of innovative hardware they could come up with for the AI era.

It’s always fascinating to see how AI continues to evolve and captivate the minds of brilliant individuals. We’ll definitely be keeping an eye on OpenAI and any updates regarding their potential share sale and the AI hardware device discussions between Jony Ive and Sam Altman. Who knows what revolutionary advancements lie ahead in the world of AI? It’s certainly an exciting time!

Have you heard the news? Vectara has just launched Boomerang, a new model aimed at improving GenAI accuracy. What’s so special about it, you ask? Well, Vectara says it outperforms big-name competitors and sets a new benchmark for Grounded Generative AI in the business world.

Boomerang is part of Vectara’s GenAI platform and is a next-gen neural information retrieval model. But what exactly does it do better than the rest? For starters, it surpasses Cohere in terms of benchmark performance and matches OpenAI on certain metrics. But where it truly shines is in multilingual benchmarks. Vectara also says Boomerang prioritizes security and helps reduce bias, copyright concerns, and so-called “hallucinations” in AI-generated content.

But that’s not all! Boomerang goes above and beyond by offering cross-lingual support for hundreds of languages and dialects. It also improves prompt understanding, which ultimately leads to more accurate and faster responses. So, if you’re looking for the best of the best when it comes to GenAI accuracy, Boomerang is the way to go.

Google recently celebrated its 25th birthday and took a moment to reflect on its impressive legacy in the field of artificial intelligence. It all began back in 2001 when Google started using a machine learning algorithm to suggest improved spellings for web searches. Quite a humble beginning, but little did they know that it would pave the way for groundbreaking innovations.

One significant moment that stands out is the introduction of PaLM 2 and Gemini in 2023. These advanced models hold great promise and are expected to drive Google’s AI advancements for the next 25 years. With PaLM 2 and Gemini, Google aims to push the boundaries of what artificial intelligence can achieve.

Looking back on their 25-year journey, it’s clear that Google’s commitment to AI has been constant. From its early days of improving web search spellings to now revolutionizing various industries with cutting-edge technology, Google has demonstrated its passion for pushing the limits.

As we move into the future, there is no doubt that Google’s legacy in AI will continue to guide their innovative pursuits. With each passing year, we can anticipate even greater advancements that will shape the way we interact with technology and enhance our lives.

Google’s 25-year AI legacy sets the stage for an exciting future, as the company remains at the forefront of artificial intelligence research and development.

Have you heard about NVIDIA’s latest software called TensorRT-LLM? It’s designed to revolutionize LLM inference on H100 GPUs, delivering a whopping 8x performance boost. How impressive is that?

This software is packed with incredible features. It comes with optimized kernels, pre- and post-processing steps, and even multi-GPU/multi-node communication primitives to ensure top-notch performance. What’s really cool is that developers can experiment with new LLMs without needing deep knowledge of C++ or NVIDIA CUDA. So, it’s user-friendly and accessible to a wider range of developers.

To make things even better, TensorRT-LLM provides an open-source modular Python API, making customization and extensibility a breeze. Developers can easily tailor the software to their needs.

Now, if you thought that was it, there’s more. This software allows users to quantize models to FP8 format, which improves memory utilization. This is great news for those looking to optimize their memory usage and overall performance.

Currently, TensorRT-LLM is available in early access and will soon be integrated into the NVIDIA NeMo framework. If you’re interested, you can apply for access through the NVIDIA Developer Program, with a focus on enterprise-grade AI applications. So, keep an eye out for it!

NVIDIA is definitely pushing the boundaries of LLM deployment performance with TensorRT-LLM. It’s truly an exciting development in the world of AI.

Today, we have some exciting news from Google DeepMind. They have introduced a fascinating concept called Optimization by PROmpting, or OPRO for short. What is OPRO, you ask? Well, it’s all about using language models as optimizers.

Let me break it down for you. The optimization problem is described in natural language, and at each step the language model is prompted to generate new solutions based on that description and on previously discovered solutions along with their scores. The new solutions are then evaluated and added to the prompt for the next step.

But how does it perform? The results are pretty impressive. OPRO has been applied to various tasks like linear regression, traveling salesman problems, and prompt optimization tasks. And guess what? The performance of the prompts optimized by OPRO surpassed human-designed prompts. In fact, it outperformed them by up to 8% on GSM8K and a whopping 50% on Big-Bench Hard tasks.
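The loop itself is simple enough to sketch. The version below is a minimal illustration on a tiny linear regression problem, not DeepMind's code. The data, the prompt wording, and especially `mock_llm` are assumptions: `mock_llm` is a stand-in that randomly nudges the best known solution, where a real setup would send the prompt text to an actual language model.

```python
import random

# Hypothetical data generated from y = 3x + 2.
DATA = [(x, 3 * x + 2) for x in range(-5, 6)]


def loss(solution):
    """Squared error of the line y = w*x + b on DATA."""
    w, b = solution
    return sum((w * x + b - y) ** 2 for x, y in DATA)


def build_meta_prompt(history, keep=5):
    """Task description plus the best solutions so far, listed worst first."""
    top = sorted(history, key=lambda item: item[1], reverse=True)[-keep:]
    lines = [
        "Minimize the loss of y = w*x + b. Lower is better.",
        "Previous (w, b) pairs and their losses:",
    ]
    lines += [f"w={w}, b={b}, loss={score}" for (w, b), score in top]
    lines.append("Propose a new (w, b) pair with a lower loss.")
    return "\n".join(lines)


def mock_llm(meta_prompt, history, rng):
    """Stand-in for a real LLM call. It ignores the prompt text and just
    nudges the best known solution at random."""
    (w, b), _ = min(history, key=lambda item: item[1])
    return (w + rng.choice([-1, 0, 1]), b + rng.choice([-1, 0, 1]))


def optimize(steps=200, seed=0):
    """Propose, score, and feed the result back into the next prompt."""
    rng = random.Random(seed)
    start = (0, 0)
    history = [(start, loss(start))]
    for _ in range(steps):
        prompt = build_meta_prompt(history)
        candidate = mock_llm(prompt, history, rng)
        history.append((candidate, loss(candidate)))
    return min(history, key=lambda item: item[1])


best = optimize()
print(best)
```

The part that carries over to the real method is the feedback loop: every scored solution goes back into the prompt, so each new proposal can build on what has worked so far.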

This breakthrough has the potential to revolutionize optimization methods. By leveraging the power of language models, DeepMind is exploring new avenues for solving complex problems more effectively. Exciting times lie ahead as language models continue to evolve and enhance our problem-solving abilities.

Meta has some ambitious plans in the works! Word on the street is that they’re gearing up to give OpenAI’s GPT-4 a run for its money. How, you ask? Well, by training their very own chatbot model that’s meant to be just as capable. Meta means business.

Now, here’s the interesting part. Meta’s going all out for this venture. They’re snatching up Nvidia H100 AI-training chips, which means they won’t have to rely on Microsoft’s Azure cloud platform for training their chatbot. Smart move, if you ask me. They’re also working on expanding their data centers to beef up their chatbot’s capabilities. Talk about leveling up!

But wait, there’s more. CEO Mark Zuckerberg has big dreams for this chatbot model. He wants it to be available to companies for free so that they can create their own AI tools. Imagine the possibilities! With Meta’s model, companies can start developing AI tools that can mimic human expressions. That’s pretty darn cool.

So, keep your eye on Meta, folks. They’re going head-to-head with OpenAI, and who knows what kind of AI magic they’ll whip up next. It’s an exciting time for AI enthusiasts, that’s for sure!

Google is taking a big leap towards responsible AI development with the launch of its Digital Futures Project. They’re putting their money where their mouth is by establishing a $20 million Google.org fund, which will provide grants to top-notch think tanks and academic institutions worldwide.

The aim of this project is to empower researchers who are tackling the challenging task of developing AI responsibly. Google wants to foster a global conversation and organize events that bring together experts to discuss and debate policy solutions for responsible AI.

They’re starting off strong with some impressive names as the inaugural grantees of the Digital Futures Fund. Institutions like the Aspen Institute, Brookings Institution, Carnegie Endowment for International Peace, the Center for a New American Security, the Institute for Security and Technology, and SeedAI are among the fortunate recipients. And this is just the beginning. Google plans to support institutions from different corners of the globe.

With this initiative, Google is sending a clear message: they’re serious about making sure AI development is done the right way. By investing in research, organizing events, and supporting global experts, they’re paving the way for responsible AI that benefits society as a whole.

So, get this: Microsoft, MIT, and Google have teamed up for a groundbreaking project. They’ve taken the entire Project Gutenberg Collection and transformed it into audiobooks! Yeah, you heard that right. Now you can listen to thousands of free and open audiobooks, all thanks to some serious AI power.

But here’s the really cool part: they didn’t just stop at converting the texts into boring, monotonous readings. No, sir! They utilized the latest advancements in neural text-to-speech technology to give these audiobooks a real voice. And not just any voice, mind you. The quality of the voice acting is exceptional. It’s like having a professional narrator whispering the words in your ear.

But that’s not all. They’ve also created a system that lets you customize the audiobook experience. You can adjust the speaking speed and style to suit your preferences. Want a more emotional reading? No problem. They’ve got you covered. And get this – you can even find a voice that matches your desired sound by providing just a small sample of audio. How cool is that?

With this collaboration, the possibilities for audiobooks are truly endless. So, if you’re a bookworm who loves to listen on the go, or if you just want to immerse yourself in a great story, these AI-powered audiobooks are definitely worth checking out. Trust me, you won’t be disappointed.

Today, there is a huge demand for talent in Generative AI. It’s no surprise that the number of companies looking for “Generative AI” expertise in their job postings is soaring.

Tech giants like Amazon, Nvidia, Microsoft, and Google are leading the way in hiring GenAI talent. But they’re not alone! Big banks such as Citigroup and Capital One are also jumping on the GenAI bandwagon.

Interestingly, the technology sector is the top industry seeking GenAI experts, followed by finance and healthcare. Sectors like real estate, basic materials, and energy, on the other hand, haven’t been as enthusiastic about GenAI.

What’s even more intriguing is the salary companies are willing to pay for GenAI talent. Among all the technical skills and technologies being tracked, jobs mentioning “Generative AI” or “LLMs” had the highest average base salary at a whopping $200,837 per year.

Clearly, Generative AI is a hot field right now. The demand for talent is exploding, and companies are willing to pay top dollar to attract the best minds in the industry. So, if you’re interested in GenAI, there’s no better time to jump on board!

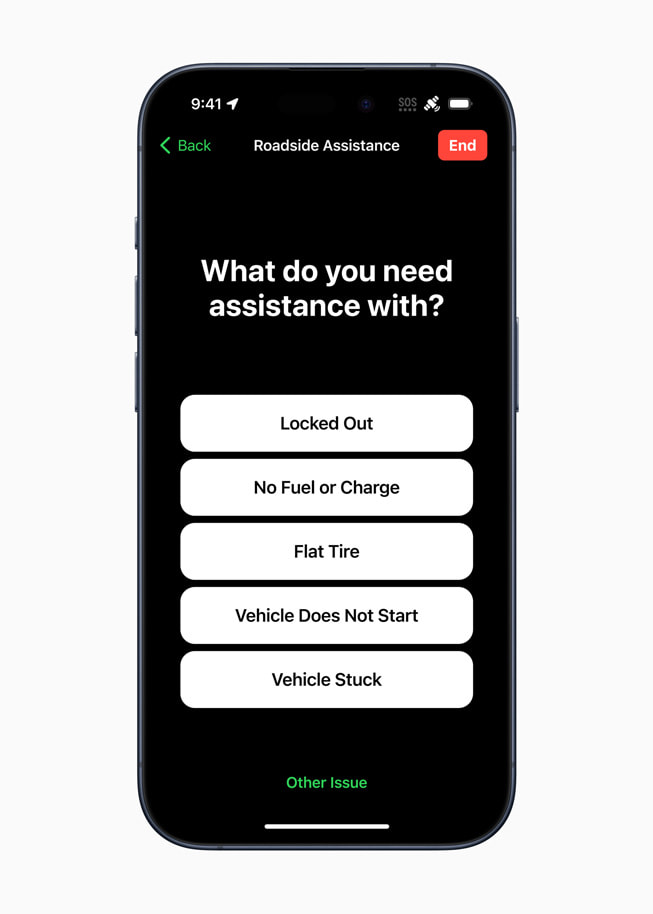

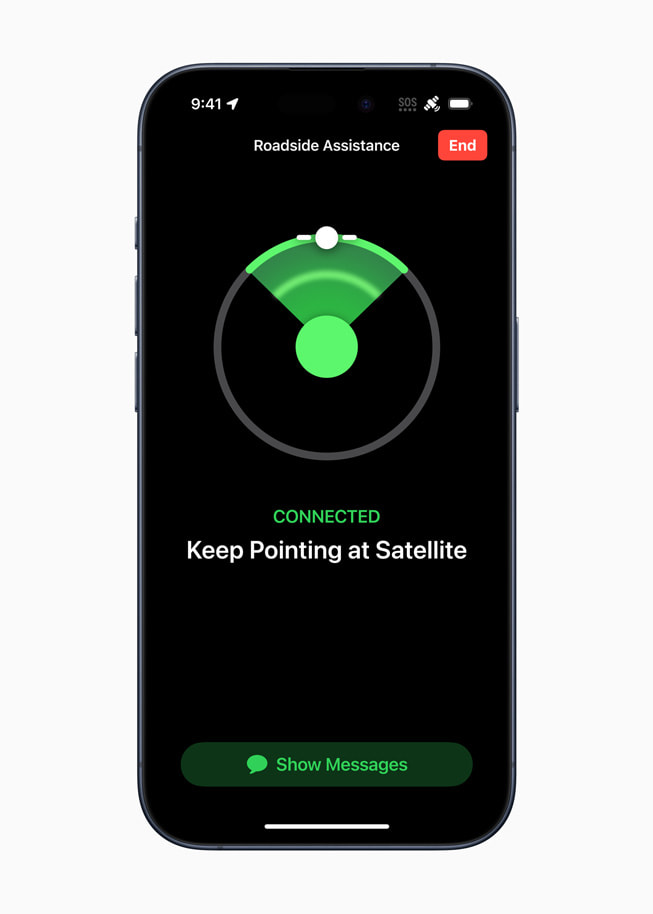

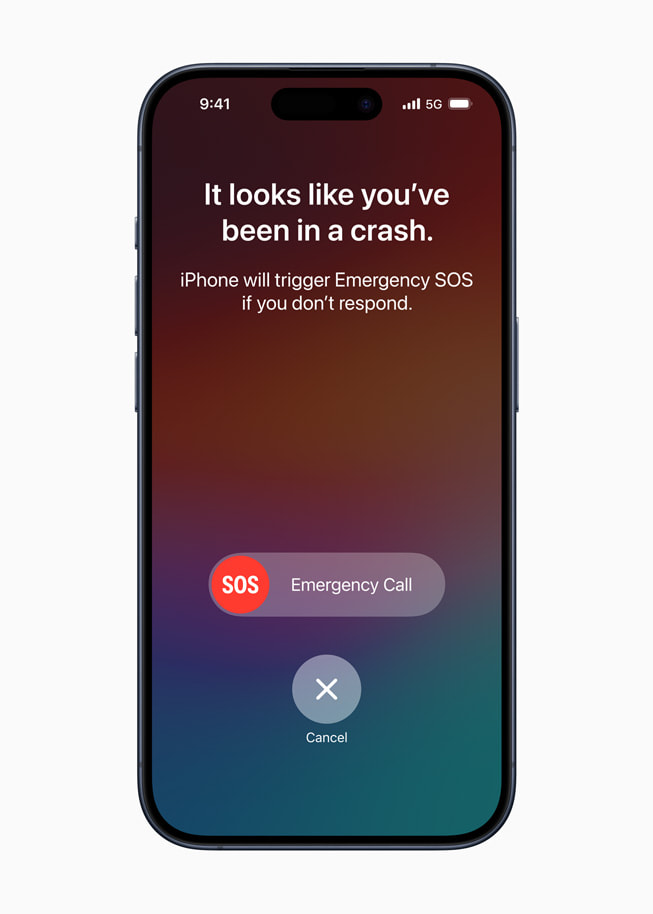

So listen up, folks! You might not have noticed, but Apple is sneaking in some AI wizardry into their snazzy new iPhones and watches. They’re slyly improving the basic functions of their gadgets using artificial intelligence. How clever!

At their developer conference, Apple didn’t shout about AI from the rooftops, but behind the scenes, their tech wizards have been weaving AI magic into their core software products. Sneaky, right?

Now, let me tell you about the cool features they’ve cooked up. First up, they’ve got these new-fangled semiconductor designs that power AI functions. With these babies, you can expect better call quality and sharper image capture on your new iPhone. Say goodbye to those pixelated photos!

But wait, there’s more! Apple’s watches are getting a boost too, thanks to their spanking new chip. It’s got a snazzy four-core “Neural Engine” that makes Siri even smarter. According to Apple, Siri’s accuracy has improved by as much as 25%. Impressive, right? Plus, this new chip opens up exciting new ways for you to interact with your trusty watch.

Oh, and here’s a neat trick: the new iPhone is so clever, it can automatically recognize the people in your photos. No more scrolling through hundreds of pics just to find that one shot of grandma blowing out her birthday candles. Apple’s got you covered!

So, while Apple might not be making a big fuss about AI, they’re certainly weaving their magic behind the scenes. Who knows what other tricks they’ve got up their sleeve?

Have you heard about Salesforce’s latest offering? They have introduced Einstein Copilot Studio, a tool that allows customers to customize their AI offerings. It’s a game-changer!

With the prompt builder, customers have the freedom to add their own custom prompts for their products or brands. Imagine being able to tailor the AI experience specifically to your business needs! This will undoubtedly give you an edge in the market and make your brand stand out.

But it doesn’t stop there. The skills builder feature is another exciting element of Copilot Studio. It enables companies to add actions to prompts, such as competitor analysis or objection handling. This means that you can make your AI not only smart but also incredibly useful, addressing various aspects of your business.

And if you’re wondering about the technical side of things, the model builder is here to save the day. It allows customers to bring their own models or even use supported third-party offerings. So, whether you have an existing model you want to integrate or prefer a ready-made solution, Salesforce has got you covered.

Now, I know what you might be thinking – what about biases and inappropriate responses? Well, Salesforce is one step ahead. They are actively working on a system called “the Einstein Trust Layer” to tackle those concerns. They understand the importance of fairness and ensuring that the AI delivers appropriate responses without any biases.

All in all, Salesforce’s Einstein Copilot Studio is a great tool for anyone looking to harness the power of AI in a customized and tailored way. It’s all about empowering you to create an AI experience that truly represents your brand and meets the specific needs of your business.

NExT-GPT is a groundbreaking AI system that is revolutionizing the world of human-like AI research. This multimodal language model is pretty impressive because it can comprehend and create content in different forms like text, images, videos, and audio. This means it’s not limited to just one way of understanding and generating information, but rather has a wide range of options at its disposal.

What’s even more fascinating about NExT-GPT is how it builds on existing models. By utilizing pre-trained encoders and decoders, it minimizes the need for extensive parameter tuning. This not only saves time but also enhances efficiency.

But that’s not all – NExT-GPT goes beyond mere optimization. It introduces something called modality-switching instruction tuning (MosIT). This nifty feature allows the system to seamlessly switch between different modalities, adapting to the specific requirements of the task at hand. This adaptability adds a whole new layer of intelligence to the model.

To further enhance performance, NExT-GPT also comes with a meticulously curated dataset specifically designed to tackle complex cross-modal understanding. This dataset empowers the system to comprehend intricate relationships between different modalities and generate richer, more contextually relevant content.

All in all, NExT-GPT is a remarkable innovation in AI research, redefining the boundaries of what AI can achieve in terms of human-like understanding and generation of content across multiple modalities.

Meta AI has introduced Belebele, an impressive benchmark dataset that covers a staggering 122 language variants. This exciting development allows text models to be evaluated across a wide range of languages, including high-, medium-, and low-resource ones. By expanding the language coverage of natural language understanding benchmarks, Belebele opens up new possibilities for language comprehension research.

The Belebele dataset itself consists of questions based on short passages taken from the Flores-200 dataset. Each question offers four multiple-choice answers, designed to assess various levels of overall language comprehension. With this dataset, model performance can be directly compared across all languages. It has already been employed to evaluate both multilingual masked language models and large-scale language models.
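Because every question has the same four-option format, comparing languages comes down to per-language accuracy. Here is a minimal sketch of that bookkeeping. The record fields (`lang`, `options`, `answer`), the sample questions, and the `always_first` baseline are hypothetical and are not the real dataset schema.

```python
from collections import defaultdict


def accuracy_by_language(examples, predict):
    """Return {language: fraction of questions answered correctly}."""
    correct = defaultdict(int)
    total = defaultdict(int)
    for example in examples:
        total[example["lang"]] += 1
        if predict(example) == example["answer"]:
            correct[example["lang"]] += 1
    return {lang: correct[lang] / total[lang] for lang in total}


def always_first(example):
    """Trivial baseline: always pick the first of the four options."""
    return 0


# Made-up records; "answer" is the index of the correct option.
EXAMPLES = [
    {"lang": "eng", "options": ["A", "B", "C", "D"], "answer": 0},
    {"lang": "eng", "options": ["A", "B", "C", "D"], "answer": 1},
    {"lang": "fra", "options": ["A", "B", "C", "D"], "answer": 0},
]

scores = accuracy_by_language(EXAMPLES, always_first)
print(scores)
```

Swapping `always_first` for a function that queries a real model would give the kind of side-by-side language comparison described above.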

Interestingly, the results show that much smaller multilingual masked language models, pretrained on balanced multilingual data, still understand far more languages than the larger, English-centric LLMs. This finding emphasizes the potential of compact yet versatile models when it comes to understanding diverse languages.

With Meta AI’s Belebele dataset, the field of language understanding and comprehension reaches new heights, enabling researchers to explore the intricacies of languages from around the world. This invaluable resource promises to drive further advancements in the development of language models and contribute to the overall progress of natural language processing technology.

Hey there! Guess what? Stability AI just came out with their first Japanese vision-language model, called Japanese InstructBLIP Alpha. It’s an absolute game-changer! This model is designed to generate textual descriptions for input images and even answer questions about them. How cool is that?

Let me fill you in on some of the details. This incredible model is actually built upon the Japanese StableLM Instruct Alpha 7B. It takes advantage of the InstructBLIP architecture. And you know what? It’s pretty darn good at what it does.

One of the things that makes this model stand out is its ability to accurately recognize Japan-specific objects. Plus, it can process text input like questions. Isn’t that amazing? It’s available on the Hugging Face Hub for inference and additional training. But here’s something important to note: it’s exclusively for research purposes.

Now, let’s talk about the applications. This nifty model can be used for so many things. It can power search engine functionality, provide scene descriptions, and even generate textual descriptions specifically for blind individuals. How awesome is that? Stability AI has really hit it out of the park with this one.

So, if you’re a researcher looking to explore the possibilities of vision-language models, this Japanese InstructBLIP Alpha is definitely something worth checking out. Go ahead, give it a spin!

So, here’s an interesting paper I came across that explores the relationship between transformers and support vector machines (SVMs). The authors establish a formal equivalence between the optimization geometry of self-attention in transformers and a hard-margin SVM problem.

What does this mean? Well, it turns out that when we optimize the attention layer of transformers with vanishing regularization, it converges in direction towards an SVM solution. Specifically, this solution minimizes the nuclear norm of the combined key-query parameter.

But that’s not all! The study goes on to prove that gradient descent also converges under certain conditions. And they even introduce a more general SVM equivalence for nonlinear prediction heads.

So what does all this mean in plain terms? It suggests that transformers can be thought of as a hierarchy of SVMs. These SVMs separate and select the optimal tokens, allowing transformers to perform their magic.
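For readers who want the shape of the claim, here is a hedged sketch in LaTeX. The first problem is the textbook hard-margin SVM. The second is a paraphrase of the attention version in shorthand of our own: x_{it} is token t of sequence i, z_i is the query token, alpha_i is the index of the token that attention should select, and W is the combined key-query matrix. These symbols are assumptions for illustration, so check the paper for the exact statement.

```latex
% Textbook hard-margin SVM: separate labeled points with margin at least 1.
\min_{w} \ \tfrac{1}{2} \lVert w \rVert_2^2
\quad \text{s.t.} \quad y_i \, w^\top x_i \ge 1, \qquad i = 1, \dots, n

% Attention analogue (paraphrased): the selected token must beat
% every other token in its sequence by a margin of at least 1.
\min_{W} \ \lVert W \rVert_\star
\quad \text{s.t.} \quad (x_{i\alpha_i} - x_{it})^\top W z_i \ge 1,
\qquad t \ne \alpha_i, \quad i = 1, \dots, n
```

In both problems the constraints ask for a margin of at least 1 and the objective picks the smallest-norm solution that achieves it.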

This paper sheds some light on the inner workings of transformers, revealing that there’s a connection between transformers and SVMs. It’s fascinating to see how different machine learning techniques can be related and build upon each other.

Have you heard about Amazon’s latest breakthrough in AI-powered palm recognition? It’s called Amazon One, and it’s revolutionizing the way customers interact with everyday activities. This fast, convenient, and contactless device allows you to use the palm of your hand for various tasks, such as making payments, presenting loyalty cards, verifying your age, or even entering a venue. Yes, you heard that right – no need for your phone or wallet!

So, how does Amazon One work? It’s a combination of advanced technologies like generative AI, machine learning, cutting-edge biometrics, and optical engineering. These components come together to create a seamless and secure experience for users.

This innovative device is already making its way to over 500 Whole Foods Market stores and several other third-party locations, including travel retailers, sports and entertainment venues, convenience stores, and grocers. And it doesn’t stop there – Amazon One goes the extra mile by being able to detect fake hands and reject them, ensuring the highest level of security.

Here’s the impressive part – Amazon One has already been used over 3 million times with astounding accuracy of 99.9999%. This speaks volumes about its reliability and efficiency.

Imagine the convenience of leaving your phone and wallet at home while effortlessly completing various tasks with just the palm of your hand. Thanks to Amazon One, this futuristic concept is now a reality.

Did you know that Intel is really stepping up its game in the AI field? They’re not just focused on data center-based AI accelerators anymore. Nope, they’re expanding their reach and going after the AI opportunity in multiple ways.

According to Intel’s CEO, Pat Gelsinger, AI is going to become more accessible to everyday users. And why is that? Well, there are a few reasons. First, there are economic considerations. It’s getting cheaper and more affordable to incorporate AI technology into various products. Second, there are physical considerations. AI is moving closer to end-users because it’s no longer confined to giant data centers. And finally, there are privacy considerations. By having AI on devices, users have more control over their data.

So, how is Intel making this happen? They’re incorporating AI into their server CPUs, like the Sapphire Rapids. These CPUs have built-in AI accelerators specifically designed for inference tasks. But Intel doesn’t stop there. They’re also launching the Meteor Lake PC CPUs that have dedicated AI hardware. This means AI workloads can be accelerated directly on user devices. And with Intel’s dominant position in the CPU market, it’s a win-win situation. Software providers are more likely to support Intel’s AI hardware, making it even more appealing for everyday users like you and me.

It’s an exciting time for AI, and Intel is definitely making some bold moves to stay ahead of the game.

Hey there! If you’re itching to delve deeper into the world of artificial intelligence, I’ve got just the thing for you. There’s this super informative book out there called “AI Unraveled: Demystifying Frequently Asked Questions on Artificial Intelligence.” Trust me, it’s a gem.

What’s great about this book is that it’s all about making AI less cryptic and more accessible. If you’ve got questions about artificial intelligence swirling in your mind, this book is here to give you the answers you’re seeking. It’s like having a knowledgeable friend who’s always there to clarify things for you.

You might be wondering where you can get your hands on this literary marvel. Well, you’ve got options! This book is available at Apple, Google, and Amazon, so you can choose the platform that suits you best. Whether you love your Apple device, prefer to browse through Google Play, or are a dedicated Amazon enthusiast, you’re covered.

So, what are you waiting for? Go ahead and snatch up a copy of “AI Unraveled” today. With this book in your hands, you’ll be well on your way to unraveling the mysteries of artificial intelligence. Happy reading!

We covered a wide range of topics, including the evolution of search and language models, Amazon’s collaboration with Anthropic, Google’s personalized route suggestions in Google Maps, MIT’s Style2Fab AI tool, DeepMind’s AlphaMissense system for predicting genetic diseases, OpenAI’s DALL·E 3 text-to-image model, and Amazon’s generative AI updates for Alexa and Fire TV. We also discussed Meta’s chatbot personalities, the launch of Getty Images’ Generative AI art tool, Colossal-AI’s open-source language model, and the potential sale of shares by OpenAI. Additionally, we touched on NVIDIA’s TensorRT-LLM software, Google DeepMind’s language models as optimizers, and Apple’s integration of AI into their devices. Finally, we explored Salesforce’s Einstein platform, Meta AI’s Belebele dataset, Stability AI’s Japanese InstructBLIP Alpha model, and the expansion of AI by Intel. Don’t forget to check out “AI Unraveled,” a book that answers frequently asked questions about AI. Join us next time on AI Unraveled as we continue to demystify frequently asked questions on artificial intelligence and bring you the latest trends in AI, including ChatGPT advancements and the exciting collaboration between Google Brain and DeepMind. Stay informed, stay curious, and don’t forget to subscribe for more!


Are you eager to expand your understanding of artificial intelligence? Look no further than the essential book “AI Unraveled: Demystifying Frequently Asked Questions on Artificial Intelligence,” available at Apple, Google, or Amazon today at https://amzn.to/3ZrpkCu

Emerging AI Innovations: September 30th, 2023

Nvidia offices just got raided by French authorities

- French antitrust authorities conducted a surprise raid on NVIDIA, suspecting anticompetitive practices in the graphics cards sector.

- The raid was part of a larger investigation into the cloud computing market, focusing on major players like Amazon Web Services, Google Cloud, and Microsoft Azure.

- The French Competition Authority emphasized that a raid doesn’t indicate guilt, but it is a significant move by the regulator.

Mark Zuckerberg unveils Meta’s new lifelike VR avatars

- Podcaster Lex Fridman conducted what he calls the “first interview in the Metaverse” with Mark Zuckerberg, using their exceptionally realistic VR avatars created through extensive facial scans.

- The 3D avatars, called Codec Avatars, map the user’s facial expressions in real-time and are more bandwidth efficient than transmitting video, according to Zuckerberg.

- Although the currently displayed avatars use a state-of-the-art scanning process, Zuckerberg’s future plan is to generate similar quality avatars using a quick scan from users’ smartphones.

Tesla faces federal lawsuit over racial discrimination allegations

- The US Equal Employment Opportunity Commission (EEOC) has sued Tesla, accusing it of violating the Civil Rights Act by creating a hostile work environment for its Black employees.

- The lawsuit alleges rampant racial harassment including slurs, racial stereotypes, and offensive graffiti at Tesla’s Fremont, California, manufacturing facilities starting from May 2015.

- The EEOC claims Tesla retaliated against Black employees who objected to discriminatory practices, and is seeking damages, reinstatement, and policy changes within Tesla.

Epic Games announces major layoffs and Bandcamp sale

- Fortnite developer Epic Games is laying off 16% of its workforce, affecting approximately 830 employees.

- The layoffs have been attributed to financial instability and major structural change to their economics, despite Fortnite’s growth and efforts to cut costs.

- Severance packages, including six months of base pay plus health care coverage and other benefits, are being offered to those affected by the layoffs.

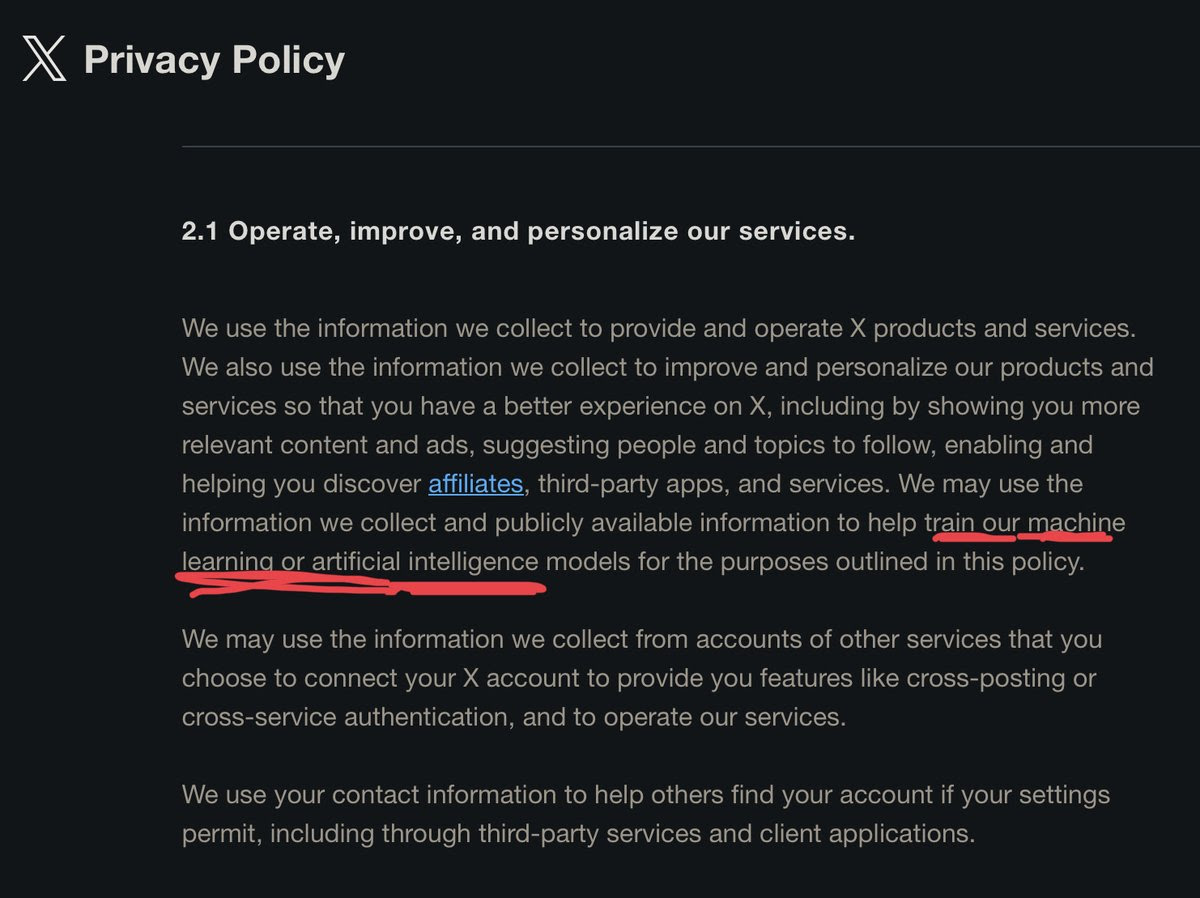

Meta AI: The new ChatGPT rival was trained on your posts

Meta’s new AI assistant, a potential rival to ChatGPT, is being trained using public posts from Facebook and Instagram.

Meta AI: ChatGPT’s Rival

Introduction to Meta AI: Launched at Meta Connect 2023, Meta AI aims to become a prominent assistant across platforms such as Instagram, WhatsApp, and Facebook.

Capabilities: Beyond just providing information like ChatGPT, it will perform tasks across various platforms and is set to integrate with products like the Ray-Ban Meta smart glasses and Quest 3.

Training on Your Posts

Data Source: The unique edge of Meta AI comes from its training on public posts from Facebook and Instagram, essentially learning from users’ informal content or “sh*tposts.”

Respecting Privacy: Meta takes care to not use private posts or messages for training, emphasizing the respect of user privacy.

Other Tech news you might like

Apple petitions the Supreme Court to overturn a ruling that forces it to allow third-party payments in its App Store, arguing that the ruling is unconstitutional.

Tim Cook, Apple CEO, asserts that the upcoming Vision Pro headset, fueled by app developers’ creativity, will revolutionize computing, work, communication, and our overall experience – comparing its potential impact to the iPhone’s.

SpaceX’s defense-oriented Starshield bags its first contract from the U.S. Space Force, enhancing satellite internet capacity and military resilience.

Footage from a Serve Robotics food delivery robot helped LA police to arrest and convict two attempted thieves, amid public surveillance concerns.

Steroid-promoting videos are increasing on TikTok, potentially endangering young men’s health and propagating harmful body image ideals, according to the CCDH.

Daniel Ek identifies three personas of Mark Zuckerberg: the young, egotistical founder, the controversial figure of the Cambridge Analytica scandal, and the current, more responsible version.

Meta Platforms Chief Executive Mark Zuckerberg on Wednesday rolled out new AI products for consumers, including bots that create photo-realistic images and smart glasses that answer questions, as well as an updated virtual-reality headset.

The European Union is examining alleged anticompetitive practices in chips used for artificial intelligence, a market that Nvidia dominates, Bloomberg News reported on Friday, citing people familiar with the matter.

Sex robots powered by a futuristic AI algorithm will one day give humans the best sex of their lives, it has been sensationally claimed.

National Security Agency Director Army Gen. Paul M. Nakasone today announced the creation of a new entity to oversee the development and integration of artificial intelligence capabilities within U.S. national security systems.[4]

Emerging AI Innovations: September 29th, 2023

Can AI Create Another AI?

If yes, what do you think: how far are we from achieving this? In particular, I mean a general AI system that can design and create another AI system by itself, without any human intervention or guidance.

I am exploring the possibility of self-replicating and self-improving AI systems, which could have profound implications for the future of humanity and civilization.

I think that the answer is Yes, to a certain extent: AI can be involved in the creation of other AI systems. This process is typically known as automated machine learning (AutoML). AutoML involves using algorithms and computational power to automate the process of training and optimizing machine learning models.

In AutoML, AI systems, often referred to as “meta-learning” algorithms or “AI assistants,” are used to perform tasks like feature engineering, model selection, hyperparameter tuning, and even creating custom architectures. They aim to automate the labor-intensive aspects of machine learning, making it more accessible to a wider audience, including those without extensive expertise in data science.
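Hyperparameter tuning is the easiest of these steps to show in a few lines. The sketch below uses random search, one of the simplest AutoML strategies, to pick a learning rate and epoch count for a tiny linear model. The toy data, the search ranges, and the function names are assumptions for illustration and are not taken from any particular AutoML library.

```python
import random

# Toy data on the line y = 3x + 1, split into training and validation sets.
POINTS = [(i / 10, 3.0 * (i / 10) + 1.0) for i in range(20)]
TRAIN, VALID = POINTS[::2], POINTS[1::2]


def train_linear(train, lr, epochs):
    """Fit y = w * x + b with plain gradient descent, starting from zero."""
    w = b = 0.0
    n = len(train)
    for _ in range(epochs):
        grad_w = sum(2 * (w * x + b - y) * x for x, y in train) / n
        grad_b = sum(2 * (w * x + b - y) for x, y in train) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b


def mse(model, data):
    """Mean squared error of the (w, b) model on the given data."""
    w, b = model
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


def random_search(train, valid, trials=20, seed=0):
    """Try random configurations; keep the one with the lowest validation error."""
    rng = random.Random(seed)
    best = None
    for _ in range(trials):
        config = {
            "lr": 10 ** rng.uniform(-4, -1),       # log-uniform learning rate
            "epochs": rng.choice([10, 50, 200]),
        }
        score = mse(train_linear(train, **config), valid)
        if best is None or score < best[1]:
            best = (config, score)
    return best


config, score = random_search(TRAIN, VALID)
print("best config:", config, "validation MSE:", score)
```

Full AutoML systems replace the random sampler with smarter search and extend the same loop to model selection and architecture choices.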

However, it’s important to note that while AI can assist in the development and optimization of models, it does not possess the creative or conceptual abilities to create entirely novel AI algorithms or architectures from scratch. That level of innovation still relies on human researchers and engineers.

The NSA is establishing an “Artificial Intelligence Security Center”

The NSA is creating a new center focused on promoting secure AI development and defending U.S. advances from foreign adversaries aiming to co-opt the technology.

The AI Security Center

Aims to help spur the secure integration of AI capabilities.

Will develop best practices and risk management frameworks.

Its goal is to understand and combat threats to U.S. AI advances.

Motivations

The U.S. currently leads in AI, but the advantage is precarious.

Adversaries have long stolen intellectual property.

Agencies are adopting AI rapidly across missions.

It will work with industry, labs, and academia on priorities.

It comes after an NSA study showed the need to prioritize security.

Must understand AI vulnerabilities and counter-threats.

TL;DR: The NSA is establishing an AI Security Center to promote secure development and adoption of AI while defending U.S. progress from adversaries aiming to exploit the technology.

Google is expanding its AI-powered search experience to teenagers

Google’s AI-driven search experience, Search Generative Experience (SGE), is now accessible to teenagers aged 13 to 17 in the U.S. Features include a conversational mode for searches, which Google believes can help youngsters pose less typical questions and dig deeper.

Teen-friendly AI search

SGE introduces a conversational mode to Google Search, allowing users to ask questions and follow-ups in a more natural language.

To prevent harmful content from surfacing, Google has placed guardrails, providing stronger protections related to illegal and age-gated substances, or bullying.

Features and improving AI accuracy

Google is rolling out “About this result” to provide users with more context about the displayed content.

Google acknowledges that AI-powered responses can sometimes validate false or offensive claims, and says it is working to address this and to provide higher-quality, more accurate responses.

It’s also using large language models to self-critique and rewrite draft responses on sensitive topics based on quality and safety principles.

SGE’s popularity and future plans

Since SGE’s introduction, it has found popularity, especially among younger users who prefer a conversational approach.

Google plans to expand SGE outside the U.S. to India and Japan and improve its services with support for videos, images, local info, and more.

It’s also experimenting with ads positioned next to the AI-generated responses.

Daily AI Update News from AWS, Meta, Google, Spotify, Zapier, Microsoft, Cohere, and Mayo Clinic

AWS has announced 5 major generative AI updates and innovations

Amazon Bedrock is now generally available.

Amazon Titan Embeddings is now generally available.

Meta’s Llama 2 is coming to Amazon Bedrock in the next few weeks.

A new Amazon CodeWhisperer capability is coming soon. It will allow customers to securely customize CodeWhisperer suggestions using their private code base to unlock new levels of developer productivity.

New generative BI authoring capabilities in Amazon QuickSight to help business analysts easily create and customize visuals using natural-language commands.

Meta introduces Llama 2 Long

– In new research, Meta presents a series of long-context LLMs that support effective context windows of up to 32,768 tokens. The model series is built through continual pretraining from Llama 2 with longer training sequences and on a dataset where long texts are upsampled. Notably, the 70B variant can already surpass gpt-3.5-turbo-16k’s overall performance on a suite of long-context tasks. Check out the research for more interesting findings!

Google announces Google-Extended and expands access to SGE

– Google-Extended will let web publishers control access to content on their site for training Google’s AI models. And Google has opened up access to SGE in Search Labs to more people, specifically teens in the U.S., so they too can benefit from generative AI’s helpful capabilities.
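Google-Extended is a user-agent token that publishers can target in robots.txt. The sketch below uses Python’s standard `urllib.robotparser` to show how such a rule reads to a generic robots.txt parser. The robots.txt contents and the example.com URL are made up for illustration, and how Google itself applies the token is defined by Google’s own documentation, not by this parser.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt: opt out of Google-Extended, allow everyone else.
ROBOTS_TXT = """\
User-agent: Google-Extended
Disallow: /

User-agent: *
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

url = "https://example.com/articles/some-post"  # placeholder URL
extended_allowed = parser.can_fetch("Google-Extended", url)
search_allowed = parser.can_fetch("Googlebot", url)

print("Google-Extended allowed:", extended_allowed)
print("Googlebot allowed:", search_allowed)
```

In this reading, the site stays open to ordinary crawling while the Google-Extended token is told to stay out.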

Spotify is adding auto-generated transcripts to millions of podcasts

– The transcript feature will expand to more podcasters on Spotify and include time-synced text. In the future, creators could add media to transcripts– a useful feature if a creator is describing an image on the show, for example.

Zapier launches Canvas, an AI-powered flowchart tool

– The tool aims to help its users plan and diagram their business-critical processes with a bit of AI to help them turn those processes into Zapier-based automations. Canvas is now in early access.

Microsoft opens AI Co-Innovation Lab in San Francisco to empower Bay Area startups

– The lab’s main goal is to facilitate the transition from ideation to prototyping, providing companies with the resources and guidance they need to refine their AI-based concepts.

Cohere jumps into the fray of the AI chatbot race by releasing a new API

– The Chat API with Retrieval-Augmented Generation (RAG) will allow third-party developers at other enterprises to build powerful chat applications based on Cohere’s proprietary generative LLM, Command.

Mayo Clinic to deploy and test Microsoft generative AI tools

– Mayo Clinic is among the first healthcare organizations to deploy Microsoft 365 Copilot. It is testing the Early Access Program with hundreds of its clinical staff, doctors, and healthcare workers.

Meta AI Chatbot: Meta CEO Mark Zuckerberg recently introduced a new chatbot assistant called Meta AI. It is designed to compete with OpenAI’s ChatGPT and can answer questions using results from Microsoft’s Bing search engine. Additionally, it can generate images from text commands. The assistant is powered by Meta’s large language model, Llama 2, and will be available for a limited group of US users on Facebook Messenger, Instagram, and WhatsApp.

Generative AI Tools: Meta has also launched two generative AI tools for photo editing that will be made available to Instagram users next month. The first tool, called Backdrop, can swap the background of a photo with one generated by a text prompt. The second tool, Restyle, uses generative AI to create artistic effects, such as surrounding a person with puppies.

Celebrity Chatbots: Meta has introduced a collection of chatbots based on approximately 30 celebrities, including tennis star Naomi Osaka and former football player Tom Brady. These chatbots are available in beta on Facebook Messenger, Instagram, and WhatsApp. For example, there is a chatbot based on Paris Hilton playing a mystery-solving detective and another based on Snoop Dogg as a dungeon master1.

Swiss Army Llama: Do tons of useful stuff with local LLMs with a REST API

GitHub Repo: https://github.com/Dicklesworthstone/swiss_army_llama

This project originally started out with a focus on easily generating embeddings from Llama2 and other llama_cpp (gguf) models and storing them in a database, all exposed via a convenient REST API. But since then, I’ve added a lot more functionality:

New endpoint for generating text completions (including specifying custom grammars, like JSON).

Get all the embeddings for an entire document. It can be any kind of document (plaintext, PDFs, .doc/.docx, etc.), and it will do OCR on PDFs and images.

Submit an audio file (wav/mp3) and it uses whisper to transcribe it into text, then gets the embeddings for the text (after combining the transcription segments into complete sentences).

Integrates with my new vector similarity library (`pip install fast_vector_similarity`) to provide an “advanced” semantic search endpoint. This uses a 2-step process: first it uses FAISS to quickly narrow down the set of stored embeddings using cosine similarity, then it uses my vector similarity library to compute a bunch of more sophisticated (and computationally intensive) measures for the final ranking.
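The two-step search described above can be sketched in plain Python. This is only an illustration of the idea, not the project's code: the cheap first pass uses a brute-force cosine similarity in place of FAISS, and the second pass uses Spearman rank correlation as a stand-in for the "more sophisticated" measures. The function names (`cosine`, `spearman`, `two_stage_search`) and the parameters `shortlist_size` and `top_k` are assumptions made up for this example.

```python
import math

def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def ranks(values):
    """1-based ranks of the values, with ties given their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def spearman(a, b):
    """Spearman's rho: the Pearson correlation of the two rank vectors."""
    ra, rb = ranks(a), ranks(b)
    mean_a, mean_b = sum(ra) / len(ra), sum(rb) / len(rb)
    # Pearson correlation equals the cosine of the mean-centered vectors.
    return cosine([x - mean_a for x in ra], [y - mean_b for y in rb])

def two_stage_search(query, store, shortlist_size=3, top_k=2):
    """Step 1: shortlist by cosine similarity. Step 2: rerank by Spearman's rho."""
    shortlist = sorted(
        store, key=lambda name: cosine(query, store[name]), reverse=True
    )[:shortlist_size]
    reranked = sorted(
        shortlist, key=lambda name: spearman(query, store[name]), reverse=True
    )
    return reranked[:top_k]

# Toy "database" of stored embeddings (hypothetical data).
store = {
    "a": [1.0, 2.0, 3.0, 4.0],
    "b": [4.0, 3.0, 2.0, 1.0],
    "c": [1.0, 2.0, 4.0, 3.0],
    "d": [-1.0, -2.0, -3.0, -4.0],
}
results = two_stage_search([1.0, 2.0, 3.0, 4.0], store)
```

The design point is that the expensive measure only ever runs on the shortlist, so its cost does not grow with the size of the full embedding store.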

As a result, I changed the project name to Swiss Army Llama to reflect the new project goal: to be a one-stop shop for all your local LLM needs so you can easily integrate this technology in your programming projects. As I think of more useful endpoints to add, I will continue growing the scope of the project. (I constantly get new feature ideas from my own separate projects: whenever I want to do something that isn’t covered yet, I add a new endpoint or option.) So let me know if there is some functionality that you think would be generally useful, or at least extremely useful for you!

A big part of what makes this project useful to me is the FastAPI backbone. Nothing beats a simple REST API with a well-documented Swagger page for ease and familiarity, especially for developers who aren’t familiar with LLMs. You can set this up in 1 minute on a fresh box using the docker TLDR commands, come back in 15 minutes, and it’s all set up with downloaded models and ready to do inference or get embeddings. It also lets you distribute the various pieces of your application on different machines connected over the internet.

Emerging AI Innovations: September 28th, 2023

Will LLMs replace Search?

They will evolve and blend together.

– search offers freshness, relevancy, comprehensiveness – all gained in more than 29 years of industrial research[1]

– large models offer phenomenal language understanding and generation, but sometimes lack verifiability, attributions, and relevancy – not surprising, given they have been around for only 6 years[2]

In my view, we will see a rise of Language Search Models (LSMs for short), where the best of the two worlds will emerge

[1] The Anatomy of a Large-Scale Hypertextual Web Search Engine, 1998, Google

[2] Attention Is All You Need, 2017, Google
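One way to picture how search and language models might blend is a retrieve-then-generate loop: a search step picks fresh, attributable sources, and the model is asked to answer only from them. The sketch below is a toy illustration under stated assumptions, not any vendor's implementation. The keyword-overlap scoring, the `[S1]` citation format, and the function names (`score`, `retrieve`, `build_prompt`) are all hypothetical, and the final call to a language model is deliberately left out.

```python
def score(query: str, text: str) -> int:
    """Count how many distinct query words also appear in the text."""
    return len(set(query.lower().split()) & set(text.lower().split()))

def retrieve(query, documents, top_k=2):
    """Search step: return up to top_k documents that share words with the query."""
    ranked = sorted(documents, key=lambda doc: score(query, doc["text"]), reverse=True)
    return [doc for doc in ranked[:top_k] if score(query, doc["text"]) > 0]

def build_prompt(query, sources):
    """Generation step input: a prompt that asks for answers with attributions."""
    lines = ["Answer using only the sources below and cite them like [S1]."]
    for number, doc in enumerate(sources, start=1):
        lines.append(f"[S{number}] {doc['title']}: {doc['text']}")
    lines.append(f"Question: {query}")
    return "\n".join(lines)

# Hypothetical mini-index standing in for a real search backend.
documents = [
    {"title": "Doc A", "text": "llama 2 was released by meta"},
    {"title": "Doc B", "text": "bing is a search engine"},
    {"title": "Doc C", "text": "cats sleep a lot"},
]
question = "who released llama 2"
sources = retrieve(question, documents)
prompt = build_prompt(question, sources)
```

The resulting `prompt` would then be passed to whichever language model is in use; because every source is numbered, the answer can carry the attributions that plain generation tends to lack.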

Meta’s new exciting AI experiences & tools

- Meta’s new AI features include an AI Assistant powered by Bing. It will provide real-time information and generate photorealistic images from text prompts. Meta used specialized datasets to train the AI to respond in a conversational and friendly tone. The first extension of the AI Assistant will be web search. The AI Assistant will be available in beta on WhatsApp, Messenger, and Instagram.

- Introduced 28 AI personality chatbots based on celebrities, such as Tom Brady, Naomi Osaka, Mr. Beast, and more. These chatbots, accessible on platforms like WhatsApp, Messenger, and Instagram, provide topic-specific conversations but are currently text-based, with plans to introduce audio capabilities. These AI personalities were created using Llama 2. Meta aims to integrate Bing search functionality in the future. The chatbots’ animations are generated through AI techniques, offering a cohesive visual experience.

- Launching AI Studio, a platform allowing businesses to build AI chatbots for Facebook, Instagram, and Messenger, initially focusing on Messenger for e-commerce and customer support apps. This toolkit will be available in alpha.

- Gen AI stickers powered by Emu allow users to create unique stickers across its messaging apps. Users can type in their desired image descriptions, and Emu generates multiple sticker options in just a few seconds. Initially available to English-language users, this feature will roll out over the next month.

- Introducing 2 new AI Instagram features, Restyle and Backdrop. Restyle lets users transform the visual style of their images by entering prompts such as “watercolor”, while Backdrop changes the background of photos using prompts.

- Launching new-generation Ray-Ban smart glasses in partnership with EssilorLuxottica. They will feature improved audio and cameras and over 150 different custom frame and lens combinations, and they are lighter and more comfortable. Wearers will be able to livestream to Facebook or Instagram and say “Hey Meta” to engage the Meta AI assistant by voice.

Why does this matter?

With this move, Meta takes a lead as the AI race heats up. Its new AI-driven experiences and tools aim to take user interactions to the next level and open up numerous possibilities in communication and content creation.

Jony Ive and OpenAI’s plan to build the ‘iPhone of artificial intelligence’

Apple’s former chief design officer, Jony Ive, is reportedly in discussions with OpenAI to build the “iPhone of artificial intelligence,” aided by over $1 billion in funding from Softbank CEO Masayoshi Son.

Design and Interaction

Design partnership with LoveFrom: OpenAI’s CEO, Sam Altman, plans to leverage Jony Ive’s design firm, LoveFrom, to develop OpenAI’s debut consumer device.

Aiming for natural interaction: Ive and Altman are focused on creating a device that offers a user experience mirroring the intuitiveness of the original iPhone’s touchscreen for AI interactions.

Influence and Funding

Inspired by iPhone’s success: The new device draws inspiration from the original iPhone, highlighting the transformative impact of touchscreen technology.

Softbank’s financial backing: Masayoshi Son is not only providing over $1 billion in funding but has also suggested involving chip design company Arm in the project.

Focus on Healthy Tech Consumption

Ive’s concerns on smartphone addiction: Jony Ive has historically voiced concerns over the addictive nature of smartphones.

Vision for screenless computing: The collaboration with OpenAI presents an opportunity for Ive to work on an interactive device less dependent on screens.

OpenAI links ChatGPT with Internet

ChatGPT is back with internet browsing. It can now browse the internet to provide current and reliable information, along with direct links to sources. This update addresses feedback received since the browsing feature was launched in May. The model now follows robots.txt and identifies itself through its user agent, so that websites can control how it interacts with them.

Currently available to Plus and Enterprise users, browsing will be expanded to all users soon.

To try it out, enable Browse in your beta features setting:

Click on ‘Profile & Settings’ > Select ‘Beta features’ > Toggle on ‘Browse with Bing’ > Choose Browse with Bing in the selector under GPT-4.
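To make the robots.txt behavior mentioned above concrete, here is a minimal sketch of how any well-behaved crawler can check a site's preferences before fetching a page, using Python's standard `urllib.robotparser`. It is not OpenAI's implementation. The user agent name `ExampleBrowseBot`, the sample rules, and the helper `is_allowed` are hypothetical and exist only for illustration.

```python
from urllib.robotparser import RobotFileParser

def is_allowed(robots_txt: str, user_agent: str, url: str) -> bool:
    """Return True if the given robots.txt rules let user_agent fetch url."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser.can_fetch(user_agent, url)

# Hypothetical robots.txt: one named bot is kept out of /private/,
# every other crawler is allowed everywhere.
EXAMPLE_ROBOTS = """\
User-agent: ExampleBrowseBot
Disallow: /private/

User-agent: *
Allow: /
"""

blog_ok = is_allowed(EXAMPLE_ROBOTS, "ExampleBrowseBot", "https://example.com/blog/post")
private_ok = is_allowed(EXAMPLE_ROBOTS, "ExampleBrowseBot", "https://example.com/private/data")
other_bot_ok = is_allowed(EXAMPLE_ROBOTS, "OtherBot", "https://example.com/private/data")
```

Because the rules are keyed by user agent, a crawler that announces a stable, documented name gives site owners a way to opt out of that crawler specifically without blocking everyone else.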

Why does this matter?

Users can now access up-to-date and trustworthy information with direct source links, making it a valuable tool for research, problem-solving, and knowledge acquisition. With browsing set to expand beyond Plus and Enterprise users, OpenAI is also positioning it as a resource for a broader audience.

The difference between AI creativity and human creativity, and how it is rapidly narrowing.

While many consider human creativity to be truly original and superior in results, the boundaries between AI-generated content and human creativity are becoming increasingly blurred. And it’s looking increasingly likely that AI may soon be on par with humans in creative content generation. Let’s look at a quick comparison between humans and ChatGPT to understand this:

Definition

Creativity in the context of content creation is the ability to produce original and innovative content that is engaging, informative, and valuable to the target audience. By this definition, both humans and AI can create new content by logically combining existing knowledge based on the unique context and purpose. Both have also been shown to create valuable, informative, and helpful content.

However, humans may be more capable of abstract thinking that breaks free from the typical creative patterns that AI is trained on. This is because they are influenced by diverse factors (like bias, flawed memory, multiple senses, emotions and more), apart from past knowledge and logic. As AI advances toward better multimodal and experiential learning, this difference may reduce greatly.

Basis of Creativity:

Humans- Our creativity often stems from a combination of stored knowledge, experiences, and innate cognitive processes. We remix, reframe, and reinterpret what we know to create something new.

GPT & AI- Similarly, AI models like GPT generate content based on patterns in their training data. They can combine and restructure this information in novel ways.

Initiation:

Notably, AI also lacks spontaneous initiation and requires prompting by humans, as it does not have personal objectives or desires. The output quality largely depends on the input and can be manipulated easily, which is not the case with humans. This passive and dependent basis makes AI appear less creative, but that may just be because it is designed to depend on and adjust to human inputs.

Influence of Emotions and Identity:

Humans- Emotions and personal identity play a significant role in many creative endeavors. They influence tone, nuance, and depth in storytelling, poetry, and even in some journalistic pieces.

AI- AI can copy these influences easily based on patterns in the input data! It may not genuinely “feel” or “experience” emotions or identity, but that hardly makes a difference in the final output as long as it can recreate the influence of emotions.

Multimodal Learning and Experience:

Humans- Humans learn from their unique experiences due to their distinct life situations, and they use multiple senses to gain unique and holistic knowledge, i.e., multimodal learning. ChatGPT cannot do this as of now.

AI- Advancements in AI and IoT are leading to models that can process and generate content across multiple modalities (text, image, audio, video). This brings AI closer to the way humans perceive and interact with the world, allowing it to form unique multimodal experiences that it can apply to content creation.

Dynamic Learning and Memory:

Humans- Human memories are temporary and flawed as they fade or even vary over time. We also continuously learn, adapt, and change our perspectives based on new experiences and knowledge. We also perform research for new information. So, the creativity of an individual will naturally vary over time.

AI- Current models like GPT have static and permanent knowledge once trained. However, future models might incorporate dynamic learning, allowing them to adapt over time without explicit retraining (covered in the previous point). This is already partly applicable, as LLMs are being integrated with search engines and use researched online data.

Ethical Considerations:

Humans- Human creativity is often bound by ethical considerations, cultural sensitivities, and a sense of responsibility. Humans also understand the context in detail with higher accuracy due to multimodal experiences and personal understanding of the audience’s perspective (empathy).

AI- AI can be programmed to follow certain ethical guidelines, but the nuances and complexities of human ethics and an understanding of the audience’s perspective can be challenging to encapsulate and replicate fully. This may cause harmful outputs or restrict the creative abilities of the LLM.

Output quality:

Humans- It all comes down to the quality of creative outputs, and a skilled human writer with good language, research/knowledge, and reasoning skills can make highly creative outputs while maintaining accuracy and moderation. There is still the chance for human error or personal bias, which can possibly be corrected by the same writer through review and introspection.

AI- AI can also craft very creative and accurate outputs through existing knowledge or online research and typically tends to avoid bias or controversial outputs. However, there are cases where it may hallucinate and create false/harmful outputs or refuse to provide harmless outputs unless proper context and knowledge are manually fed. Since it cannot understand the reader’s perspective or user’s intent directly and does not have an understanding that the data it is consulting may be false, these errors will likely continue.

In the future, as AI becomes more advanced and incorporates more dynamic and multimodal learning as well as a better understanding of the audience, the gap between AI-generated content and human creativity might narrow further. What do you think?

Whose societal worth will AI hurt the most?

Now that we know AI’s capabilities by rigorously testing ChatGPT-4 … I’ve been pondering how it will impact the future. Let’s be real, it won’t replace everyone. If you got by in life by being good looking, AI won’t replace you. I don’t care how hot that silicone AI bot will be; a hot human will always be preferable. Who will it replace? I think the nerd … that person who struggled in high school but who found their way in the world by getting a job that required brainpower and paid well. Not amazingly well, but like a few hundred k.